The rise of so-called “smart factory” technologies is leading to a race to modernize manufacturing plant and warehouse floors. Old equipment is being replaced by newer, more advanced machinery as manufacturers look to keep pace with the competition — and wrestle with high turnover rates. According to a survey by Plex Systems, 50% of manufacturers accelerated their adoption of automation and digital systems during the pandemic. A separate report from The Harris Poll, commissioned by Google, found that two-thirds of manufacturers were using AI in their day-to-day operations as of June 2021.

Take those numbers with a grain of salt — they’re not from impartial sources, after all. (Plex sells manufacturing equipment, while Google sells cloud services to industrial customers.) Still, the success of startups like Invisible AI, which uses AI systems to ostensibly optimize factory processes, suggests there’s some semblance of demand out there.

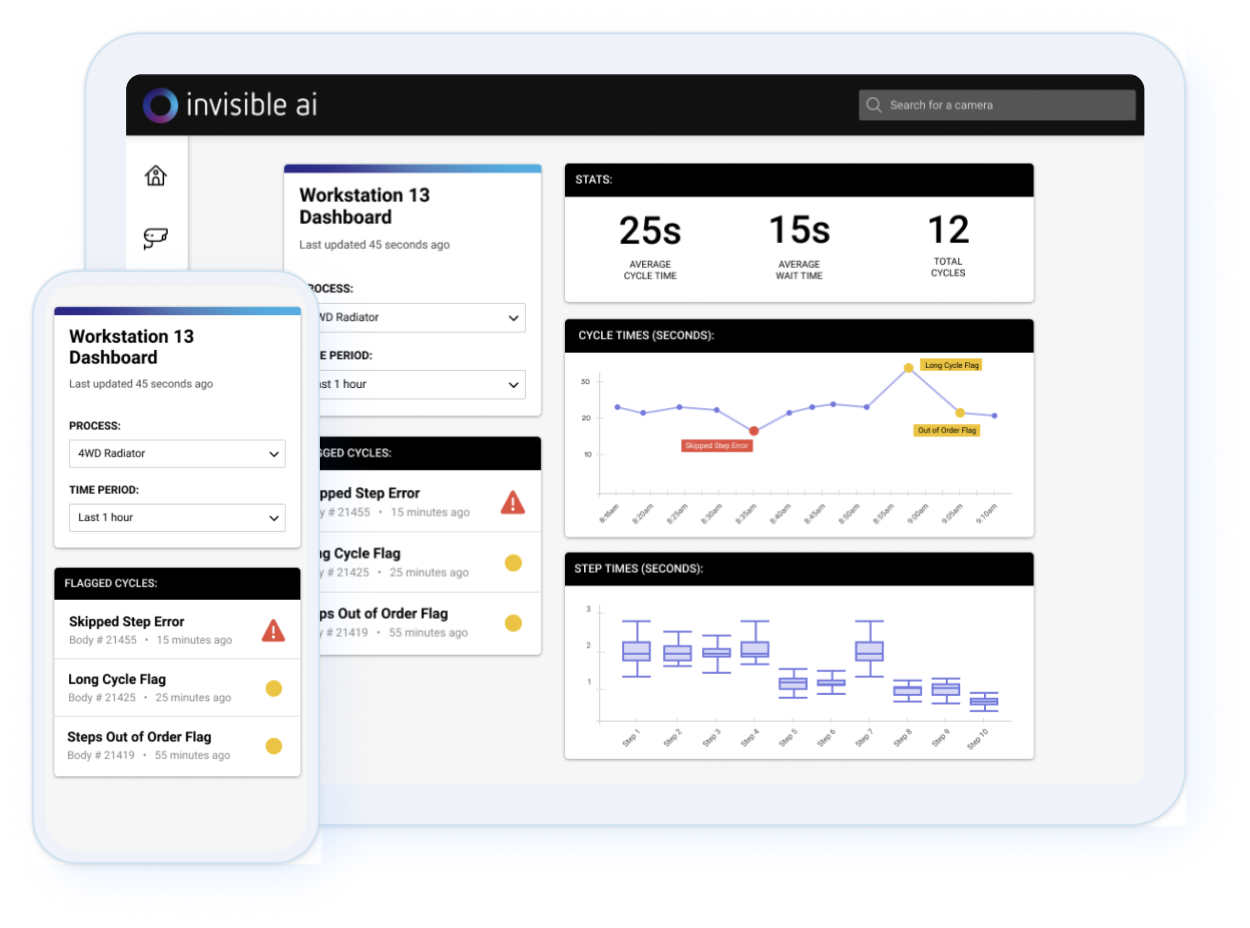

Invisible AI today announced that it raised $15 million for its product that uses cameras and algorithms to track workers’ body movements as they work through assembly processes. CEO Eric Danziger claims that the platform, which gives feedback to operators as they work, is already being used in eight facilities, including some owned by Toyota’s North America division, with an additional eight deployments planned over the next six months.

“Some of the biggest problems that can be solved today with state-of-the-art AI are within manufacturing, where safety, quality and productivity are paramount,” Danziger told TechCrunch in an email interview. “Everything done in manufacturing, from safety audits to continuous improvement cycles, is still based on manual data collection using stop watches and clipboards. We are building intelligent solutions that can help customers optimize their assembly operations.”

Danziger co-founded Invisible AI in late 2018 with Prateek Sachdeva, whom he met while working at lidar sensor startup Luminar. The two envisioned using thousands of AI-enabled cameras in manufacturing facilities to monitor workers and make sense of large environments and objects, including moving conveyors and the autonomous vehicles that carry pallets from place to place.

Invisible AI’s technology can track workers’ movements out of the box without an internet connection. Leveraging edge computers and stereo cameras, the combination hardware-software platform has a notion of depth, enabling it to detect potential safety incidents (e.g. high-stress injuries) and quality issues across different assembly lines.
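For readers curious how a stereo camera gets "a notion of depth," the textbook relation is that distance is inversely proportional to disparity, the horizontal shift of the same point between the left and right images. The sketch below illustrates that general principle only. It is not Invisible AI's implementation, which the company has not described, and the function name, parameters and sample numbers are all hypothetical.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Estimate distance to a point seen by a rectified stereo camera pair.

    Standard pinhole-stereo relation: depth = focal_length * baseline / disparity.
    All inputs here are illustrative assumptions, not values from any real product.
    """
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity;
        # negative disparity indicates a bad match between the two images.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical rig: 700-pixel focal length, lenses 12 cm apart.
# A worker's hand that shifts 42 pixels between the two views
# would sit roughly this many meters from the camera.
distance_m = depth_from_disparity(700.0, 0.12, 42.0)
print(f"{distance_m:.2f} m")
```

The inverse relationship also means depth estimates get less precise as objects move farther away, since a one-pixel matching error then corresponds to a larger change in distance.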

Danziger claims that Invisible AI can track any custom body motion or physical activity, or perform searches across a factory for things like product IDs. One app recently deployed to the platform allows customers to track the movements of forklifts on the facility floor.

“We want to build solutions that use cutting-edge AI tech but are deployable in minutes without any coding or data collection — it needs to just work for scale and adoption,” he added. “It all starts with visibility.”

Workers might rightly wonder about the privacy implications. While cameras are common in factories, persistent tracking of individual workers is not necessarily so.

In the worst case, the tech could be co-opted for decidedly invasive purposes, for example enabling managers to chastise employees in the name of productivity. Some companies already use algorithms to do this, albeit not necessarily with cameras. Amazon’s notorious “Time Off Task” system dings warehouse employees for spending too much time away from the work they’re assigned to perform, like scanning barcodes or sorting products into bins.

Unsurprisingly, Danziger was adamant that Invisible AI doesn’t condone these use cases. He noted the platform can’t currently perform facial recognition and has optional — emphasis on optional — real-time face blurring capabilities for “customers who are incredibly sensitive on this topic.”

“The best part about our system is that it is entirely edge-based and all video processing and storage is inside each camera, and only leaves the camera when an end-user is watching video using the web interface,” Danziger said. “One hundred percent of customer data is within their firewalls, which massively reduces all security risks to this sensitive video data.”

There’s nothing preventing customers from using Invisible AI for surveillance, however — and U.S. laws wouldn’t prevent this either. Each state has its own legislation pertaining to surveillance, but most give wide discretion to employers so long as the equipment they use to track employees is plainly visible (with the exception of California’s AB-701). There’s also no federal legislation that explicitly prohibits companies from monitoring their staff during the workday.

However we might feel about that fact, Invisible AI’s business is expanding, rivaling the growth of competitors like Everguard, Intenseye and Protex AI. Danziger says that Invisible AI has 10 customers across markets like automotive, aerospace and agriculture, with dozens of users per deployment, and is applying for both military and government contracts.

“Business initially slowed down during the pandemic, but the burden of increased product demand and the many labor shortage issues has yielded in increased demand for our product,” Danziger said. “We have incredible customer demand and we are trying to meet that with this funding round now.”

Van Tuyl Companies led Invisible AI’s Series A with participation from FM Capital, 8VC, Sierra Ventures, K9 Ventures and Vest Coast Capital. It brings the 20-employee startup’s total raised to $21 million; Invisible AI plans to hire 10 staffers by the end of the year.
