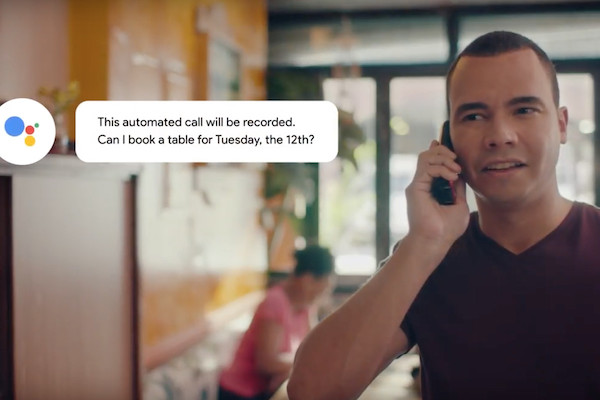

Remember Natural Language Processing? NLP emerged several years ago, but it was only in 2018 that AI researchers proved it was possible to train a neural network once on a large amount of data and use it again and again for different tasks. In 2019, GPT-2 from OpenAI and T5 from Google appeared and proved startlingly good (the technology has since been incorporated into Google Duplex, pictured). Concerns were even raised about their possible misuse.

But since then, things have gone, well, pretty exponential.

Last year saw a veritable ‘Cambrian explosion’ of NLP startups and Large Language Models.

This year, Google released LaMDA, a large language model for chatbot applications. Then DeepMind released AlphaCode and, later, Flamingo, a language model capable of visual understanding. In July of this year alone, the BigScience project released BLOOM, a massive open source language model, and Meta announced that it had trained a single language model capable of translating between 200 languages.

We are now reaching a sort of tipping point where many more commercial applications of NLP, some of them built on these open source, publicly available platforms, will hit the market. You could almost say a gold rush has begun among startups trying to build on this technology, with an arms race developing between the large language model providers.

One of those startups is Humanloop, a University College London (UCL) AI spinout which claims to make it “significantly” easier for companies to adopt this new wave of NLP technology via a suite of tools which helps humans ‘teach’ AI algorithms. This means a lawyer, doctor or banker can put a piece of knowledge into the platform, which the software then applies at scale across a large data set, allowing a broader application of AI to various industries.

It’s now pulled in a $2.6 million seed funding round led by Index Ventures, with participation from Y Combinator, LocalGlobe and Albion.

Humanloop was founded in 2020 by a team of preeminent computer scientists from UCL and Cambridge, and alumni of Google and Amazon. Its applications, it says, might include building a picture of a national real estate market from unstructured data on the internet; reading through electronic health records to identify people who could be candidates to try new therapies; and even moderating comments on Facebook groups.

“People would be shocked if they knew what language-based AI was capable of now,” says CEO Raza Habib in a statement. “But getting the data into a form that the algorithm can use is the biggest challenge. With Humanloop, we want to democratize access to AI and enable the next generation of intelligent, self-serve applications — by allowing any company to take its domain expertise and distill it efficiently in a machine learning model.”

Humanloop says its success stems from the growth of ‘probabilistic deep learning’, where algorithms can work out what they don’t know by tuning out the noise in data sets, finding the good stuff and asking humans for help with the parts they don’t understand.
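To make that idea concrete, here is a minimal sketch of uncertainty sampling, one common way for a model to flag what it doesn’t know. It is an illustration only and not Humanloop’s actual method; the comment ids, the probabilities and the function names are all hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in bits) of one predicted class distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def pick_for_human_review(predictions, budget):
    """Return the ids of the `budget` items the model is least sure about."""
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,  # highest entropy (most uncertain) first
    )
    return ranked[:budget]


# Hypothetical model outputs: probability of (abusive, fine) for each comment.
predictions = {
    "comment_a": [0.98, 0.02],
    "comment_b": [0.55, 0.45],
    "comment_c": [0.80, 0.20],
    "comment_d": [0.50, 0.50],
}

# The two comments the model is most unsure about go to a human labeller.
to_label = pick_for_human_review(predictions, budget=2)
print(to_label)
```

In this toy example the near coin-flip predictions are routed to a person while the confident ones are left alone, which is the general shape of a human-in-the-loop labelling workflow.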

Other startups building their own large language models and putting them behind APIs include Cohere AI ($164.9 million in funding) and OpenAI, with GPT-3. Snorkel AI ($135.3 million in funding) is also a new startup in this arena.

However, Humanloop says it is less focused on developing the models and more on the tools needed to adapt them to specific use cases.

“What many people don’t know is that it’s not the lack of appropriate algorithms that’s holding back AI from being ubiquitous in every workplace; it’s the absence of properly labelled data,” adds Erin Price-Wright, the partner at Index Ventures who led the investment. “In fact, machine learning itself is becoming increasingly commoditized and off the shelf, but it’s still really hard for non-technical people to transmit their knowledge to a machine and help the algorithm refine its model.” That is why Humanloop lets people tweak the data.

If the NLP gold rush is indeed on its way, expect a whole bunch of other startups to appear soon.
