Orbital imagery is one of the hot categories of the new space industry, but there’s more to it than meets the (human) eye. Pixxel has raised $25 million to launch a constellation of satellites that will provide hyperspectral imagery of the Earth: a wider slice of the electromagnetic spectrum that can reveal all kinds of details not visible to ordinary cameras.

Fundamentally, the ability to look down from miles above the planet’s surface offers all kinds of opportunities. But just as you need more than a basic digital camera in a lab, so it is with orbital imagery.

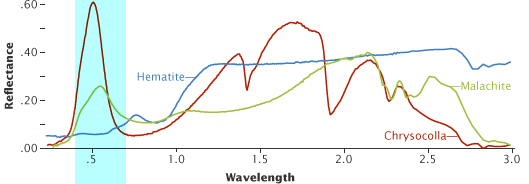

One additional tool you might find in a lab is a spectrometer, which blasts an object or substance with radiation, recording which frequencies are absorbed or reflected, and how much. Everything has a different spectral signature, meaning even closely related materials — for instance, two types of the same mineral — can be distinguished from each other.
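To make the idea of a spectral signature concrete, here is a minimal sketch of one common way to compare signatures, the spectral angle between two reflectance curves. It is not Pixxel's method, which the company has not described; the function names, the two-entry "library" and every reflectance value are invented for illustration.

```python
import math

def spectral_angle(a, b):
    """Angle in radians between two spectra sampled at the same wavelengths."""
    if len(a) != len(b):
        raise ValueError("spectra must have the same number of bands")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Clamp to guard against floating-point overshoot outside [-1, 1].
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)

def classify(pixel, library):
    """Return the name of the library signature with the smallest angle to the pixel."""
    return min(library, key=lambda name: spectral_angle(pixel, library[name]))

# Hypothetical reflectance values for two closely related materials.
# They agree in the first three bands and differ only in the last two.
library = {
    "mineral_a": [0.10, 0.30, 0.55, 0.60, 0.20],
    "mineral_b": [0.10, 0.30, 0.55, 0.25, 0.60],
}

# A made-up measurement that resembles mineral_a, with a little noise.
pixel = [0.12, 0.33, 0.50, 0.58, 0.22]
best = classify(pixel, library)
print(best)
```

The angle ignores overall brightness and compares only the shape of the curve, which is one reason it is a popular first pass for this kind of matching.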

Hyperspectral imagery is a similar process in camera form, and doing it from space lets you find the spectral signatures of an entire region in a single picture. NASA and other agencies do it for planetary observation purposes, and now Pixxel is building on the work it’s done to launch a constellation of satellites that will provide hyperspectral coverage on demand.

Founder and CEO Awais Ahmed said that, as with other nascent space industries, a combination of shrinking tech and frequent, inexpensive launches made the business possible. He candidly admitted that NASA walked so Pixxel could run, but it isn’t just repurposing taxpayer-funded tech. You could think of the EO-1 mission and Hyperion hyperspectral dataset as early market research.

“Hyperion is about 30 meters [per pixel] or so in resolution, which is great for scientific purposes. But you need to get down to 5 meters or so — otherwise, it doesn’t make sense for what we’re doing,” Ahmed explained.

The Pixxel constellation, though it will not exactly be numerous at six satellites (three launching later this year and three more early next), will be able to provide that 5-meter resolution over much of the Earth about every 48 hours. There’s already a test satellite up there sending back sample imagery, and a second-generation bird will go up next month. The production versions are larger and have more gear inside to improve the quality and quantity of images taken.

Ahmed said the company already has dozens of customers lined up for the data it will eventually provide, if not the imagery already coming down from the test satellites. These companies tend to be in the agriculture, mining, and oil and gas industries — where regular surveys of large tracts of land are important to ongoing operations.

The 5-meter resolution comes into play here, as there are features that occur on a small scale that would be lost or averaged out on a larger one. If you’re mapping a continent, 5-meter resolution is overkill, but if you’re checking the margins of a lake for harmful chemicals or a field for dehydration, you want to get as exact as you can.
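The averaging effect is easy to show with arithmetic: one 30-meter pixel covers the same ground as a 6 x 6 block of 5-meter pixels, or 36 of them. The sketch below assumes a simple block average, which is a simplification of how a real sensor integrates light, and uses a made-up grid in which a single 5-meter cell carries a strong signal.

```python
def downsample(grid, factor):
    """Average non-overlapping factor x factor blocks of a square grid.

    Assumes the grid size is an exact multiple of factor.
    """
    size = len(grid)
    out = []
    for r in range(0, size, factor):
        row = []
        for c in range(0, size, factor):
            block = [grid[r + i][c + j]
                     for i in range(factor)
                     for j in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out

# Hypothetical 30 m x 30 m patch seen at 5 m per pixel: 6 x 6 cells,
# all background (0.0) except one anomalous cell (1.0).
fine = [[0.0] * 6 for _ in range(6)]
fine[2][3] = 1.0

# The same patch seen as a single 30 m pixel.
coarse = downsample(fine, 6)
print(coarse[0][0])  # the anomaly diluted to 1/36 of its original strength
```

At full strength in one cell, the signal is obvious; spread across 36 cells it is under 3% of the pixel value, which is easy to lose in noise.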

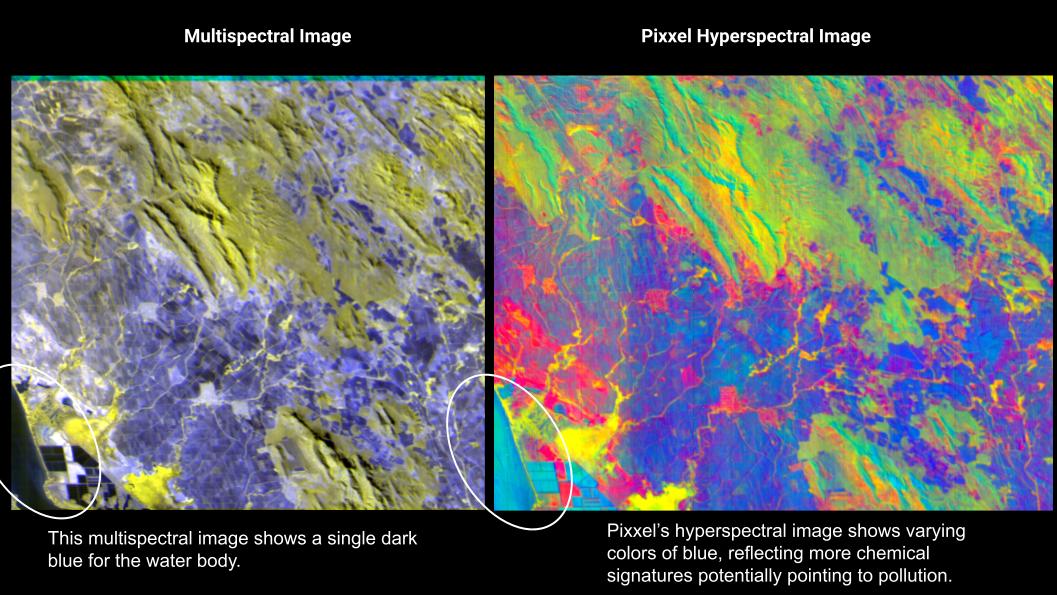

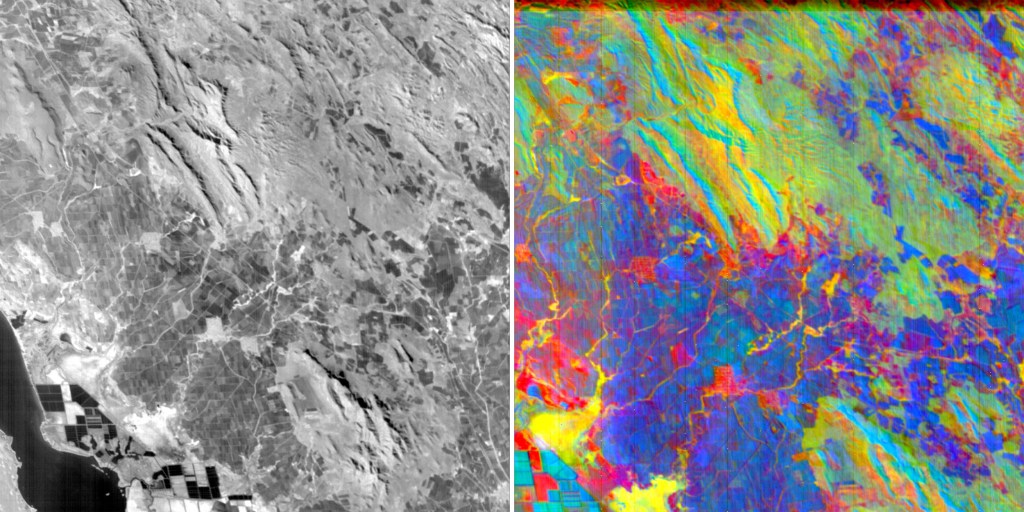

Hyperspectral imagery also reveals more, as visible light will pass right through emissions like methane, or show a similar color for two very different materials. If the lake has dark discoloration on the edge, is that algae, a shelf under the surface, or industrial runoff? Hard to tell when it’s just “blue” and “dark blue.” But hyperspectral images cover far more of the spectrum, producing a rich image that is hard for humans to intuitively understand. Just as birds and bees can see ultraviolet and it changes their perception of the world, it’s hard for us to imagine what the world would look like if we could see in the 1,900-nanometer wavelength.

Just as a simple example showing the scale here, this chart from NASA shows the spectral signatures of three minerals from 0 to 3,000 nanometers of wavelength; I’ve roughly highlighted the portion visible to human vision in blue:

As you can see, there’s a lot left on the table.

“We have hundreds of colors to play with. It helps you see, in the soil with a specific nutrient, is it over-saturated or under? Each of these manifests as a minor change in that smooth spectrum in hyperspectral imagery. But it’s invisible in RGB,” said Ahmed.

Pixxel’s sensors collect several hundred “slices” of the spectrum, whereas ordinary cameras really only capture three: red, blue and green. For comparison, Planet’s satellites have a handful of additional useful slices, creating what’s called multispectral imagery, which is better than plain RGB. But when you put together dozens or hundreds of slices, you get a more complex and representative picture (and at some point you start calling it hyperspectral instead). In the chart above, more slices mean the curves are more precise and likely more accurate.
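A rough sketch of why the number of slices matters: the code below builds a synthetic spectrum with one narrow absorption dip near 1,900 nanometers, then samples it with three broad bands and with 210 narrow ones. The spectrum, the dip, the 400 to 2,500 nanometer range and the band counts are all assumptions chosen for illustration, not Pixxel's or Planet's actual sensor specifications.

```python
import math

BASELINE = 0.5  # flat background reflectance of the synthetic spectrum

def reflectance(wavelength_nm):
    """Synthetic spectrum: flat baseline with a narrow dip near 1,900 nm."""
    dip = 0.3 * math.exp(-((wavelength_nm - 1900.0) / 15.0) ** 2)
    return BASELINE - dip

def sample_bands(start_nm, stop_nm, n_bands):
    """Split the range into n equal bands and average the spectrum in each."""
    width = (stop_nm - start_nm) / n_bands
    steps = max(1, int(round(width)))  # roughly one sample per nanometer
    values = []
    for k in range(n_bands):
        lo = start_nm + k * width
        samples = [reflectance(lo + (i + 0.5) * width / steps)
                   for i in range(steps)]
        values.append(sum(samples) / steps)
    return values

coarse = sample_bands(400, 2500, 3)    # three broad slices, 700 nm each
fine = sample_bands(400, 2500, 210)    # 210 narrow slices, 10 nm each

# How deep the dip appears in the best-placed band of each sampling.
coarse_depth = BASELINE - min(coarse)
fine_depth = BASELINE - min(fine)
print(round(coarse_depth, 3), round(fine_depth, 3))
```

With three broad bands the dip is averaged into near invisibility, while the narrow bands recover most of its depth. That is the sense in which more slices give a more precise curve.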

While there are other companies out there pursuing hyperspectral orbital imagery, none has launched a working satellite that is currently sending back data, and none has matched the 5-meter resolution and range of spectral slices Pixxel is targeting. So while competition in the space will probably arrive eventually, this constellation will likely be out in front.

“The quality of our data is the best — and a bonus is we’re doing it in a much more inexpensive way,” said Ahmed. “We’re fully funded through the first constellation.”

The $25 million Series A was led by Radical Ventures, with participation from Jordan Noone, Seraphim Space Investment Trust Plc, Lightspeed Partners, Blume Ventures, and Sparta LLC.

The money will of course go toward building and launching the satellites, but Pixxel is also working on a software platform so customers don’t have to build a hyperspectral analysis stack from scratch. They can’t just repurpose what they’ve got — there’s literally never been data like this available before. So Pixxel is building “a generalized platform with built-in models and analysis,” said Ahmed. It’s not quite ready to show publicly, though.

Pixxel’s commercial service should be operational in Q1 or Q2 of 2023, pending the usual space-related uncertainties.
