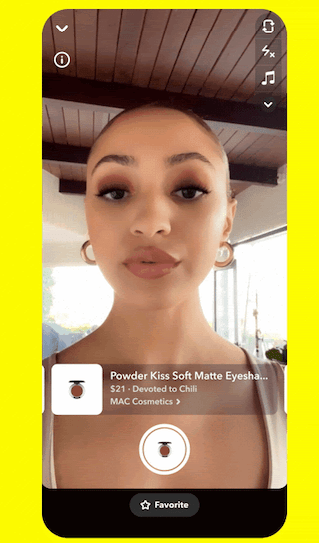

Snapchat is upgrading its AR shopping experience today with updates to both the Shopping Lenses inside its social app and the analytics shared with Snap’s brand and retail partners. For consumers, the AR shopping features become more practical to use: new Lens Product Cards, which appear as users virtually try on products, now display key product information updated in real time, such as current pricing and color details, alongside product descriptions and unique links to make purchases.

For brands, the updates are focused on providing more data about how their AR shopping features are performing.

These analytics are now provided in real time as the AR Shopping Lenses are linked directly to the company’s product catalog, Snap explains. This allows brands and marketers to gain more immediate insights that can help them with their R&D plans, as well as to determine which types of products are resonating best with Snapchat’s younger, Gen Z and millennial audience. Brands can then incorporate that data when making decisions about ad targeting and future product development. Snap notes the data can also help the brands optimize the delivery of their Shopping Lens to the app’s users who are most likely to make a purchase.

Before today’s public launch, Snap beta-tested the new AR Lens with a handful of brands, including Ulta Beauty and MAC Cosmetics. Using these “catalog-powered” Shopping Lenses, as they’re called, Ulta reported $6 million in incremental purchases on Snapchat and over 30 million product try-ons within a two-week period. MAC, meanwhile, saw 1.3 million try-ons at a cost of 0.31 cents per product trial and reported a 17x higher lift in purchases among women, a 2.4x lift in brand awareness and a 9x lift in purchase intent.

Users can swipe and use gestures to move through different product options when shopping with AR, to see what makeup, clothing, jewelry and accessories would look like on themselves. The experience is meant to offer a tech-powered alternative to trying on items in a retailer’s store. That serves a growing number of online-only brands without a brick-and-mortar footprint, as well as traditional retailers looking to reach a younger audience that frequently shops online.

Snap is also making the AR Shopping Lenses easier to create. Although it launched a free web creation tool last year for brands building AR experiences, it hadn’t included easy-to-use AR shopping templates. Now, the company promises AR Shopping Lens creation can be accomplished in just two minutes with a few clicks. This is made possible through an update to the Lens Web Builder, but initially, only for beauty brands. In the months ahead, Snap will offer similar updates to other shopping categories. In the meantime, any brand can continue to use Snap’s Lens Studio to build their AR lenses.

The company has been heavily investing in its AR shopping business in recent months, with the goal of making shopping a deeper part of the overall Snapchat experience. Last year, it upgraded its computer vision-powered Scan product, which lets users “scan” an item, like an article of clothing, with their phone’s camera to learn more about the product. The feature can also be used to scan ingredients and then surface recipes that use them. It also spent $124 million to acquire Fit Analytics, a startup that helps consumers find clothes and shoes from online retailers that fit properly. Last October, Snap also announced the launch of a creative studio, Arcadia, which helps commercialize its AR tech further by helping brands develop AR experiences that can be used across platforms, including web and apps outside of Snapchat itself.

AR try-on seems to resonate with Snap’s younger demographic, according to data Snap has shared. In a beta test with 30+ brands, Snapchat users virtually tried on products over 250 million times. AR try-on has also led to 2.4x higher purchase intent and a +14% sales lift over video spend, the data indicates. Including non-shopping AR features, over 200 million Snapchat users engage with AR on the app daily.

“Augmented reality is changing the way we shop, play, and learn, and transforming how businesses tell their stories and sell their products,” said Snap’s chief business officer, Jeremi Gorman, in a statement. “Starting today, our revamped AR Shopping Lenses will mean a more engaging experience for our Snapchat community, and enable a faster, easier way to build Lenses for businesses — directly linking Lenses to existing product catalogs for real-time analytics and R&D about which products resonate with Gen Z and Millennial audiences.”

The AR improvements could also give Snap a way to better compete with larger social media companies, which have focused less on AR-enabled shopping and more on other experiences, like live video shopping, influencer marketing powered by brand deals, and in-app shopping experiences that let users transact from within the social app they’re using, like Shop on Instagram. Snap also hopes its Lens targeting features will help the company boost its ad revenues. Those figures have been hit by Apple’s new privacy features, which caused Snap to miss Wall Street’s revenue expectations in last quarter’s earnings. It brought in $1.07 billion in revenue in Q3 2021, versus the $1.10 billion forecast, sending the stock down 22% after earnings were reported. Snap will report its Q4 and full-year 2021 earnings on February 3, 2022.
