The Cinematic Mode on the iPhone 13 Pro models had a marquee spot in Apple’s presentation about the devices last week. The reviews so far this week have people acknowledging the cleverness but questioning its usefulness.

I’ve been testing out the feature for the past week and this weekend took it to Disneyland to give it a real-world rundown in a way that thousands or even millions of people might do over the next few years. Aside from my personal testing, some of which I’ll talk about here and more about which you can find in my iPhone review here, I wanted to dig a bit deeper.

So I spoke to Kaiann Drance, VP, Worldwide iPhone Product Marketing, and Johnnie Manzari, a designer on Apple’s Human Interface Team, about the goals and creation of the feature.

“We knew that bringing a high-quality depth of field to video would be magnitudes more challenging [than Portrait Mode],” says Drance. “Unlike photos, video is designed to move as the person filming, including hand shake. And that meant we would need even higher-quality depth data so Cinematic Mode could work across subjects, people, pets and objects, and we needed that depth data continuously to keep up with every frame. Rendering these autofocus changes in real time is a heavy computational workload.”

The A15 Bionic and Neural Engine are heavily used in Cinematic Mode, especially given that they wanted to encode it in Dolby Vision HDR as well. They also didn’t want to sacrifice live preview — something that most Portrait Mode competitors took years to ship after Apple introduced it.

But the concept of Cinematic Mode didn’t start with the feature itself, says Manzari. In fact, he says, it’s typically the opposite inside of this design team at Apple.

“We didn’t have an idea [for Cinematic Mode]. We were just curious — what is it about filmmaking that’s been timeless? And that kind of leads down this interesting road and then we started to learn more and talk more … with people across the company that can help us solve these problems.”

Drance says that before development began, Apple’s design team spent time researching cinematography techniques for realistic focus transitions and optical characteristics.

“When you look at the design process,” says Manzari, “we begin with a deep reverence and respect for image and filmmaking through history. We’re fascinated with questions like what principles of image and filmmaking are timeless? What craft has endured culturally and why?”

Even when Apple decides to deviate from the classical techniques, Manzari says, they try to make those decisions thoughtfully and respectfully with regard to the original context. The team focuses on finding a way to create something that removes complexity and unlocks potential for people by leveraging Apple’s design and engineering capacity.

In the process of developing the Portrait Lighting feature, Apple’s design team went on an exploration of classic portrait artists like Avedon and Warhol and painters like Rembrandt and Chinese brush portraits — in many cases going to visit the original pieces and breaking down those characteristics in the lab. A similar process was used to develop Cinematic Mode.

The first thing that the team did was go speak to some of the best cinematographers and camera operators in the world. They also went to movies and watched examples of films through time.

“In doing this, certain trends emerge,” says Manzari. “It was obvious that focus and focus changes were fundamental storytelling tools, and that we as a cross-functional team needed to understand precisely how and when they were used.”

They then worked closely with directors of photography, camera operators and 1st ACs (first assistant camera operators), whose responsibilities include focus pulling. The team observed them on set and asked questions.

“It was also just really inspiring to be able to talk to cinematographers about why they use shallow depth of field. And what purpose it serves in the storytelling. And the thing that we walked away with is, and this is actually a quite timeless insight: You need to guide the viewer’s attention.”

“Now the problem is that today, this is for skilled professionals,” Manzari notes. “This is not something that a normal person would even attempt to take on, because it is so hard. A single mistake — being off by a few inches … this was something we learned from Portrait Mode. If you’re on the ear and you’re not on their eyes. It’s throwaway.”

That’s not even counting tracking shots, where a focus puller is continually adjusting focus as the camera moves and even the subject moves in relation to the camera. It’s a highly skilled operation. To pull off a tracking shot, a focus puller must practice and train extensively for years. This, Manzari says, is where Apple sees an opportunity.

“We feel like this is the kind of thing that Apple tackles the best. To take something difficult and conventionally hard to learn, and then turn it into something automatic and simple.”

So the team started working through the technical problems in finding focus, locking focus and racking focus. And these explorations led them to gaze.

“In cinema, the role of gaze and body movement to direct that story is so fundamental. And as humans we naturally do this, if you look at something, I look at it too.”

So they knew they would need to build in gaze detection to help lead their focusing target around the frame, which in turn leads the viewer through the story. Being on set, Manzari says, allowed Apple to observe these highly skilled technicians and then build in that feel.

“We’re on set and we have all these amazing people and they’re really the best of the best. And one of the engineers noticed that the focus puller has this focus control wheel, and he’s just studying the way that this person does this. Just like when you look at like someone who’s really good at playing the piano, and it looks so easy, and yet you know it’s impossible. There’s no way you’re going to be able to do this,” says Manzari.

“This person is an artist, this person is so good at what they do and the craft they put into it. And so we spent a lot of time trying to model the analog feel of a focus wheel turning.”

This included the way that long distances of focus change are covered differently than short distances because of the way that the speed of handling a focus wheel ramps up and down. If, he notes, the focus changes don’t feel deliberate and natural, you don’t end up with a storytelling tool. Because a storytelling tool should feel invisible. If you’re watching a movie and notice a focus technique it’s probably because it’s soft and the focus puller missed their mark (or an actor did).
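
To make the idea of a ramped focus pull concrete, here is a minimal sketch. It is not Apple's implementation, which was not described in detail. The smoothstep easing curve, the choice to interpolate in diopters (1/distance), and the `base_s` and `per_diopter_s` timing constants are all assumptions made for illustration.

```python
def smoothstep(t: float) -> float:
    """Ease-in/ease-out curve: 0 at t=0, 1 at t=1, zero slope at both ends."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def rack_duration(start_m: float, end_m: float,
                  base_s: float = 0.3, per_diopter_s: float = 0.4) -> float:
    """Hypothetical rule: a longer pull (measured in diopters) takes more time."""
    travel = abs(1.0 / end_m - 1.0 / start_m)
    return base_s + per_diopter_s * travel


def focus_at(time_s: float, start_m: float, end_m: float) -> float:
    """Focus distance in meters at time_s seconds into a rack from start_m to end_m."""
    duration = rack_duration(start_m, end_m)
    progress = smoothstep(time_s / duration)
    # Interpolate in diopters (an assumption), then convert back to meters.
    start_d, end_d = 1.0 / start_m, 1.0 / end_m
    return 1.0 / (start_d + (end_d - start_d) * progress)


if __name__ == "__main__":
    # Sample a rack from a subject at 1 m to one at 4 m.
    for step in range(7):
        t = step * 0.1
        print(f"t={t:.1f}s focus={focus_at(t, 1.0, 4.0):.2f} m")
```

The focus starts moving slowly, speeds up in the middle and settles gently at the end, and a long pull is given more time than a short one, which is the behavior the team describes modeling.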

In the end, many of the artistic and technical goals the team brought back from its explorations became really challenging machine learning problems. Thankfully, Apple has a team of machine learning researchers and a silicon team that built the Neural Engine on hand to collaborate with. Some of the problems contained inside Cinematic Mode are genuinely new and unique ML problems. Many of them ended up being fairly thorny, involving open-endedness techniques to keep the effects nuanced and organic-feeling.

Testing Cinematic Mode

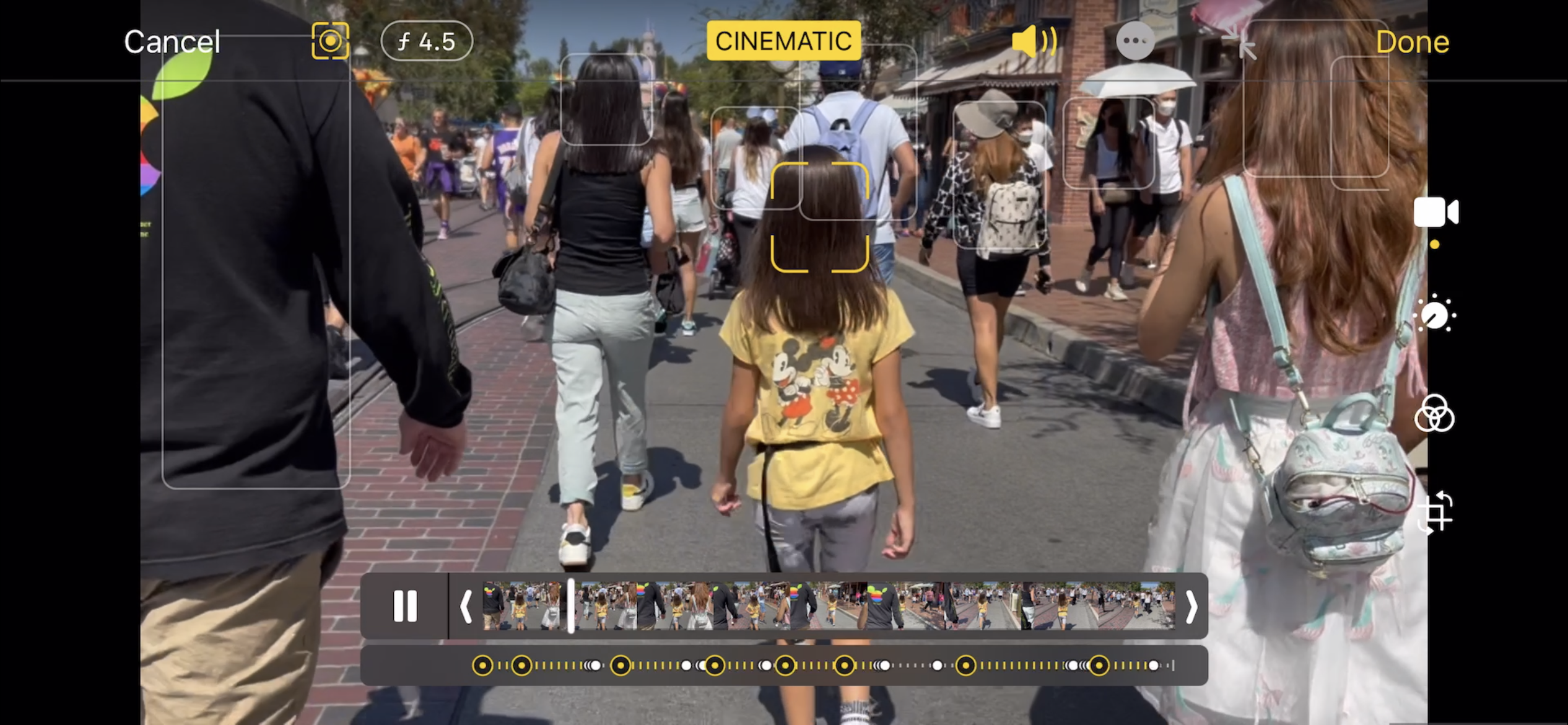

My aim in my tests was to shoot what I could in one day (and a bit of an afternoon at the pool) just like anyone going to Disneyland would hope to do. One person holding the camera, no setup and very little direction. Now and then I asked a kid to look at me. That’s about it. What you see in this reel is as close as possible to what you would experience doing this yourself, which is the whole point. There isn’t a bunch of b-roll, I didn’t re-shoot this stuff over and over. What you see is what was shot. The only editing that I did here was using Cinematic Mode to pick some points of focus after the fact, either for effect or because the automatic detection chose something I didn’t like. I didn’t have to do a lot of that but I was happy I was able to.

This footage is not perfect by any means, and neither is Cinematic Mode. The synthetic bokeh (lens blur) that Apple has gotten so good at with Portrait Mode absolutely suffers from having to be performed so many times per second. The focus tracking can still be a bit jumpy too — making post-shooting editing far more common than it is probably intended to be. And though I found that it does work just fine in low-light settings, it’s best if you’re within range of the lidar array (about 10 feet or less) if you want accurate results.

But you can see what they are after and where it is headed. And I found it absolutely usable and fun right now. I know a lot of reviews kind of breezed over it but I think that artificially testing this kind of new thing is a rough way to interpret how it will work for the normal person. It’s one of the reasons I started testing iPhones at Disneyland in 2014. We were exiting the speeds and feeds era with haste as the iPhone began to be used by millions of people — strapping it to a dyno to test the ol’ HP just wasn’t an important thing to do any more.

I’m not all that shocked that an artificial testing framework caused a lot of early reviewers to see primarily flaws (they are there!) but I see a lot more potential.

What it is

Cinematic Mode is actually a bundle of functions that exist in a new section of the camera app. It leverages nearly every major component of the iPhone to do its thing. It utilizes the CPU and GPU, of course, but also Apple’s Neural Engine for machine learning work, accelerometers for tracking and motion, and the upgraded wide-angle lens and stabilized sensor.

Some of the individual components that make up Cinematic Mode include:

- Subject recognition and tracking.

- Focus locking.

- Rack focusing (moving focus from one subject to another in an organic-looking way).

- Image overscan and in-camera stabilization.

- Synthetic bokeh (lens blur).

- A post-shot editing mode that lets you alter your focus points even after shooting.

And all of those things are happening in real time.

The way it works

The processing power needed to do all of this 30 times per second, both in a real-time preview and in post edits, is intense, to say the least. This is why you see those big leaps forward in performance in the Neural Engine and massive leaps in GPU in Apple’s A15 chips. It’s needed to pull stuff like this off. What’s crazy is that I didn’t really notice any appreciable hit in battery life even though I played with the mode a lot throughout the day. Once again, Apple’s performance-per-watt work is in evidence.

Even while you’re shooting, the power is evident as the live preview gives you a pretty damn accurate view of what you’re going to see. And while you shoot, the iPhone is using signals from your accelerometer to predict whether you’re moving toward or away from the subject that it has locked onto so that it can quickly adjust focus for you.

At the same time it is using the power of “gaze.”

This gaze detection can predict which subject you might want to move to next and if one person in your scene looks at another or at an object in the field, the system can automatically rack focus to that subject.

Because Apple already overscans the sensor for stabilization — effectively looking “beyond the edges” of your frame — the design team found that they could utilize this for subject prediction as well.

“A focus puller doesn’t wait for the subject to be fully framed before doing the rack, they’re anticipating and they’ve started the rack,” notes Manzari, “before the person’s even there. And we realize that by running the full sensor we can anticipate that motion. And, by the time the person has shown up, it’s already focused on them.”

You can see this in one of the later clips in my video above, where my daughter enters the frame bottom left already in focus, as if an invisible focus puller was anticipating her entering the scene and drawing the viewer’s attention there — to the new entry into the story.
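
The anticipation described above can be sketched roughly as follows. This is an illustrative guess at the logic, not Apple's code. The `Rect` and `Track` types, the constant-velocity prediction and the 15-frame lookahead are all hypothetical. The only idea taken from the article is that a subject seen in the overscan area, outside the visible frame, can trigger a rack before it is actually framed.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x: float, y: float) -> bool:
        return self.left <= x <= self.right and self.top <= y <= self.bottom


@dataclass
class Track:
    """A tracked subject in full-sensor pixel coordinates (hypothetical type)."""
    x: float
    y: float
    vx: float  # pixels per frame
    vy: float  # pixels per frame


def should_start_rack(track: Track, visible: Rect, lookahead_frames: int = 15) -> bool:
    """True if a subject outside the visible crop is predicted to enter it soon."""
    if visible.contains(track.x, track.y):
        return False  # already framed, so there is nothing to anticipate
    # Assumption: the subject keeps moving at constant velocity.
    for step in range(1, lookahead_frames + 1):
        if visible.contains(track.x + track.vx * step, track.y + track.vy * step):
            return True
    return False


if __name__ == "__main__":
    visible = Rect(left=100, top=100, right=1820, bottom=980)  # crop inside the sensor
    entering = Track(x=50, y=900, vx=10, vy=0)   # in the overscan margin, moving right
    leaving = Track(x=50, y=900, vx=-10, vy=0)   # in the margin, moving away
    print(should_start_rack(entering, visible))
    print(should_start_rack(leaving, visible))
```

With a check like this, the rack can begin while the subject is still in the overscan margin, so focus has already arrived by the time the subject is in the shot.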

And even after you shoot, you can correct the focus points or make creative decisions.

One cool thing about the post-shooting focus selection is that because the lenses in iPhones are so small, they naturally have an extremely deep field of focus available to them (hence the synthetic bokeh of Portrait and Cinematic Mode). This means that unless you are extremely close to an object, anything in the frame is available for you to pick from to focus on. The changes are then made in real time using the depth information and segmentation masking that Cinematic Mode carries along with every video shot to regenerate the synthetic lens blur.
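
As a rough illustration of how a stored depth map makes refocusing possible after the fact, here is a minimal sketch. It assumes a simplified model, loosely inspired by thin-lens behavior, in which blur grows with the difference between a pixel's depth and the chosen focus distance, both measured in diopters. The function names and the `strength_px` and `max_px` constants are hypothetical, and Apple's actual rendering, including its segmentation masking, is not described in enough detail to reproduce.

```python
def blur_radius_px(depth_m: float, focus_m: float,
                   strength_px: float = 12.0, max_px: float = 25.0) -> float:
    """Blur radius for one pixel: zero at the focus distance, growing with defocus."""
    defocus = abs(1.0 / depth_m - 1.0 / focus_m)  # difference in diopters
    return min(max_px, strength_px * defocus)


def blur_map(depth_map: list[list[float]], focus_m: float) -> list[list[float]]:
    """Per-pixel blur radii for a depth map (in meters) and a chosen focus distance."""
    return [[blur_radius_px(d, focus_m) for d in row] for row in depth_map]


if __name__ == "__main__":
    # A tiny 2x2 "depth map": near subject, mid subject, far wall, very close object.
    depth = [[1.0, 2.0],
             [4.0, 0.25]]
    print(blur_map(depth, focus_m=2.0))  # focus on the subject at 2 m
    print(blur_map(depth, focus_m=1.0))  # refocus on the subject at 1 m
```

Because the captured frame is sharp nearly everywhere, changing `focus_m` only changes the blur map. The same footage can then be re-rendered with a different subject in focus.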

https://twitter.com/panzer/status/1441036963587375107?s=20

In my review of the iPhone 13 Pro I said this about Cinematic Mode:

Despite the marketing, this mode is intended to unlock new creative possibilities for the vast majority of iPhone users who have no idea how to set focal distances, bend their knees to stabilize and crouch-walk-rack-focus their way to these kinds of tracking shots. It really does open up a big bucket that was just inaccessible before. And in many cases I think that those willing to experiment and deal with its near-term foibles will be rewarded with some great-looking shots to add to their iPhone memories widget.

I don’t care what filmmakers Apple brings in to demo the feature — I do not actually believe that those people who are the most capable with a camera are the ones that stand to gain the most from the feature. Instead, it is the rest of us that have a hand free if we’re lucky and some basic desire to capture the feeling of what it was like to be there — sometimes instead of the harsh reality.

And that’s the power of the language of cinema: transportation. Though it’s far from perfect in this initial iteration, Cinematic Mode gives “normal people” a toolkit to build a doorway into that world in a way that’s far easier and far more accessible than it has been in the past.

For now, there’s lots to complain about if you’re staring closely. But also lots to love if you’ve got one shot to get your kid’s reaction to seeing Kylo Ren in the flesh for the first time. And it’s hard to argue against accessibility of these tools just because they aren’t yet perfect.

“One thing that just makes me so proud is when somebody comes to me and they show me their photos … and they are so proud of what they’ve captured, and they’re just beaming because they all of a sudden feel like I’m creative! I didn’t even go to art school, I’m not a designer. No one ever thought of me as a photographer, but my photos look amazing,” says Manzari.

“Cinema kind of showed us the range of human emotion and the range of human stories and that if you get the fundamentals right they can be communicated. And life’s unfolding with your phone right on you. We’ve been working really hard on this for a long time. I can’t wait to see customers get their hands on it.”
