Research papers come out far too rapidly for anyone to read them all, especially in the field of machine learning, which now affects (and produces papers in) practically every industry and company. This column aims to collect the most relevant recent discoveries and papers — particularly in but not limited to artificial intelligence — and explain why they matter.

The topics in this week’s Deep Science column are a real grab bag, ranging from planetary science to whale tracking. There are also some interesting insights from tracking how social media is used and some work that attempts to shift computer vision systems closer to human perception (good luck with that).

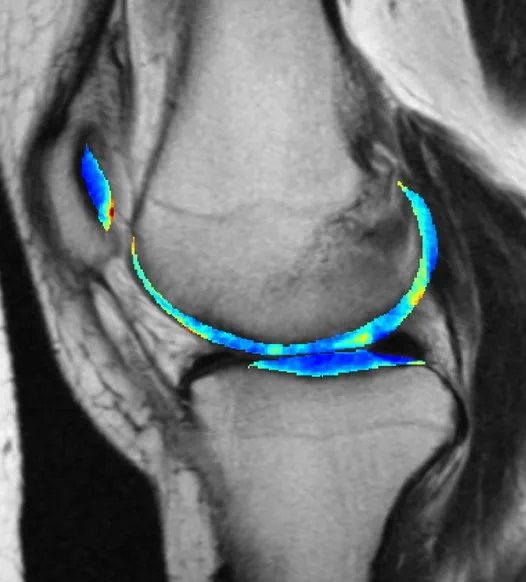

ML model detects arthritis early

One of machine learning’s most reliable use cases is training a model on a target pattern, say a particular shape or radio signal, and setting it loose on a huge body of noisy data to find possible hits that humans might struggle to perceive. This has proven useful in the medical field, where early indications of serious conditions can be spotted with enough confidence to recommend further testing.

This arthritis detection model looks at X-rays, just as the doctors who do that kind of work do. But by the time osteoarthritis is visible to the human eye, the damage is already done. A long-running project that tracked thousands of people for seven years made for a great training set, allowing the AI model to pick up the nearly imperceptible early signs of osteoarthritis and predict the condition with 78% accuracy three years out.

The bad news is that knowing early doesn’t necessarily mean the disease can be avoided, as there’s no effective treatment. But that knowledge can be put to other uses, such as much more effective testing of potential treatments. “Instead of recruiting 10,000 people and following them for 10 years, we can just enroll 50 people who we know are going to be getting osteoarthritis … Then we can give them the experimental drug and see whether it stops the disease from developing,” said co-author Kenneth Urish. The study appeared in PNAS.

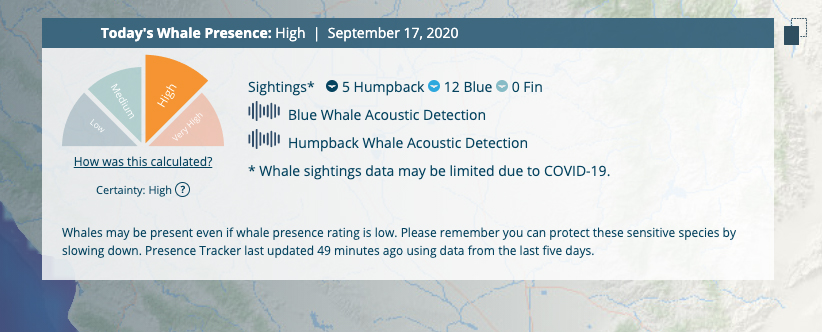

Using acoustic monitoring to preemptively save the whales

It’s amazing to think that ships still collide with and kill large whales on a regular basis, but it’s true. Voluntary speed reductions haven’t been much help, but a smart, multisource system called Whale Safe is being put in play in the Santa Barbara Channel that could hopefully give everyone a better idea of where the creatures are in real time.

The system uses underwater acoustic monitoring, near-real-time forecasting of likely feeding areas, actual sightings and a dash of machine learning (to identify whale calls quickly) to produce a prediction for whale presence along a given course. Large container ships can then make small adjustments well ahead of time instead of trying to avoid a pod at the last minute.

“Predictive models like this give us a clue for what lies ahead, much like a daily weather forecast,” said Briana Abrahms, who led the effort from the University of Washington. “We’re harnessing the best and most current data to understand what habitats whales use in the ocean, and therefore where whales are most likely to be as their habitats shift on a daily basis.”

Incidentally, Salesforce founder Marc Benioff and his wife Lynne helped establish the UC Santa Barbara center that made this possible.

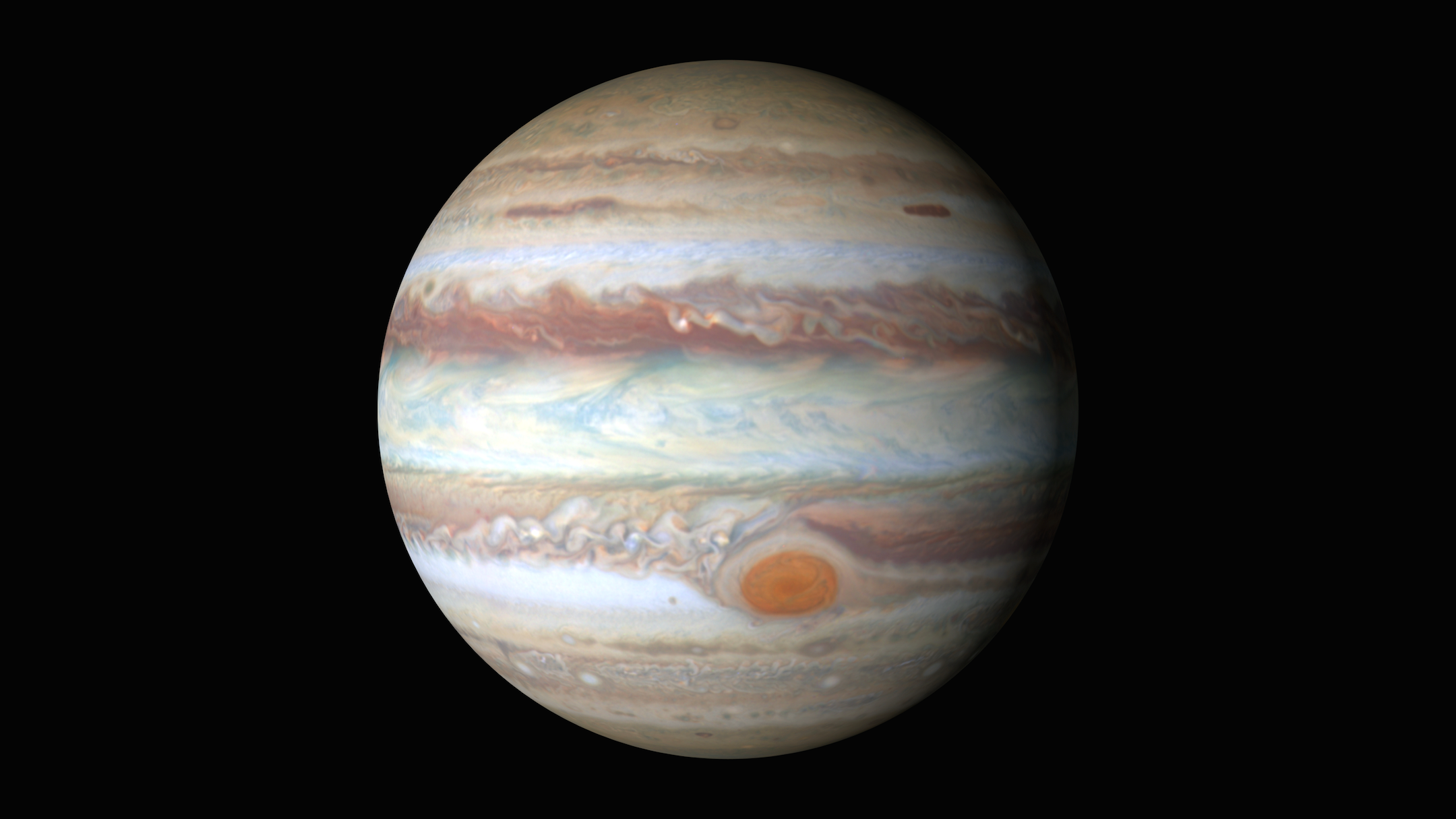

The fastest way to a gas giant’s heart

The planets known as gas giants are largely made up of hydrogen, mostly liquid but becoming metallic as you delve deeper into their cores. We don’t know exactly how it works because, of course, no one’s been down there, and it’s rather difficult to achieve those pressures and temperatures here on Earth (researchers have tried with something called a diamond anvil, which sounds very serious). So we’re stuck with theory, and simulation. But accurate simulation of these conditions is so demanding that it has been limited to a handful of atoms over a handful of nanoseconds.

The latest supercomputers and machine-learning techniques can do better. A collaboration between EPFL, Cambridge and IBM, published in Nature, used ML predictions in lieu of direct simulation of quantum mechanics, simplifying calculations but still (they believe) producing an accurate model. The results suggest a gradual transition rather than a sudden one, counter to the prevailing theory that the hydrogen at some point switches from one phase to another, like water boiling.

The calculations took weeks on EPFL’s supercomputers, but as head of the lab Michele Ceriotti said, “if we’d tried to run models developed the conventional way, it would’ve taken hundreds of millions of years.”

A little bird told me (you’re trash talking CDC guidance)

Twitter has repeatedly been likened to a firehose of information — mostly worthless, but a firehose nonetheless. How to make sense of it? Plenty of companies out there do it for a living, and Pacific Northwest National Lab is doing it to track the coronavirus response. “Although Twitter is not representative, it still provides a valuable insight into public perspectives toward different policies,” said PNNL’s Maria Glenski. So the lab has been using a tool called WatchOwl to identify and analyze millions of tweets per day touching on the pandemic.

It’s not a live measure of anything — the data is months old — but natural language analysis reveals things like positivity and negativity, the direction of spread of a sentiment or idea, and how those things interact with real-world events like announcements and major gatherings. How did marches in protest of George Floyd’s death affect nationwide discussion of mask-wearing? Did a lack of masks at the Republican National Convention prompt censure — and if so, where? This sort of thing is (in a way sadly) best observed in hindsight, and the PNNL team hopes that a better model for public response may emerge from seeing the real thing unfold.

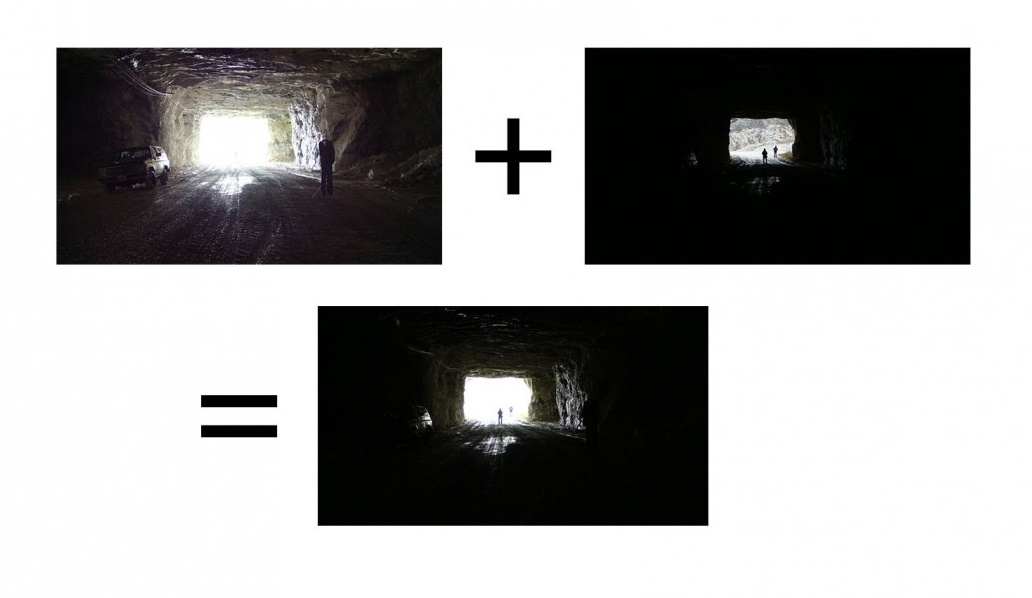

Closer to human perception (whatever that is)

Computer vision systems are getting remarkably good, but compared to the human vision system they’re pretty rudimentary. Part of the reason is the incredibly efficient and robust way that our eyes and brain can make sense of nearly any input: too dark, too bright, moving, distant, etc. The Army Research Lab wants to alleviate one particular shortcoming of how AI systems see: an inability to deal with extreme contrast.

Consider a drone flying through a forest: It needs to be able to quickly perceive and react to features that may be in deep shadow under the canopy of a tree, or in bright sunlight where there’s a break in cover. The orders-of-magnitude difference in light levels (also known as high dynamic range) makes it difficult for a camera to perceive both simultaneously or adjust quickly enough to switch between appropriate settings.

The study they did was actually on how the human brain reacts to abrupt shifts in illumination, and the results are probably not interesting to anyone not already familiar with the visual cortex and processing pathways. I selected it to highlight not the findings but the necessity of understanding nature when attempting to mimic it. Similar barriers are present across the board in AI research and emphasize the importance of basic research to advance the state of the art.

Another consideration is the speed with which we are able to recognize and categorize objects in motion. Sometimes it’s so fast you react before you know what’s happening, flinching when someone fakes you out because the perception of an object rapidly growing in your field of view is tied directly to a physiological response. Self-driving cars need to make similar judgments and reactions, but can fall short in many different ways — never recognizing something, or doing so too late, for instance.

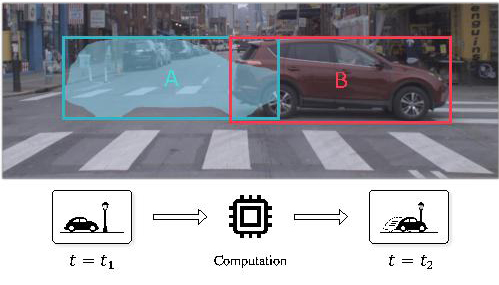

CMU researchers have proposed a new metric for how well an AI system does this, or how quickly at any rate. “Streaming perception accuracy,” as they call it, is basically just the time it takes for such a system to recognize an object or action. “By the time you’ve finished processing inputs from sensors, the world has already changed,” explained graduate student Mengtian Li, who formalized the measurement in a paper presented last month at ECCV.

It’s not some revolutionary idea, and it seems obvious in retrospect that something like this is useful: it provides a continuous estimate of how far behind reality a perception system is. Knowing that can lead to higher-level improvements, like knowing when to redirect resources or cut corners to “catch up,” or predicting how long it will be before a decision on a given object can be rendered.
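To make "how far behind reality" a little more concrete, here is a rough Python sketch. It is not the CMU team's metric or code, just an illustration under simple assumptions: frames are captured at known times, each result becomes available at a known later time, and at any given moment the system can only act on the newest result that has finished. The function name `staleness_ms` and all of the timing numbers are hypothetical.

```python
from bisect import bisect_right

def staleness_ms(finish_ms, capture_ms, now_ms):
    """Age (in ms) of the scene behind the newest finished result.

    finish_ms[i] is when the result for the frame captured at
    capture_ms[i] became available; both lists are sorted ascending.
    Returns None if no result has finished by now_ms.
    """
    # Index of the last result that finished at or before now_ms.
    idx = bisect_right(finish_ms, now_ms) - 1
    if idx < 0:
        return None
    return now_ms - capture_ms[idx]

# Hypothetical setup: a frame every 100 ms, 150 ms to process each one.
capture_ms = [0, 100, 200, 300]
finish_ms = [t + 150 for t in capture_ms]

print(staleness_ms(finish_ms, capture_ms, 300))
```

With these made-up numbers, the newest result available at the 300 ms mark describes the world as it was at 100 ms, so the system is acting on a view that is 200 ms old. A planner could watch that figure and decide when cutting corners is worth it.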

A mixed bag of engagement online

Our last study relates to social media and how scientific papers propagate on those platforms. Researchers also associated with UW (but unrelated to Whale Safe) took a look at the retweets of 1,800 preprint papers (i.e., not published in a journal but available online) that got a large amount of social engagement outside of the usual set of academics.

They found that they could break down a paper’s audience into various communities, including “mental health advocates, dog lovers, video game developers, vegans, bitcoin investors, conspiracy theorists, journalists, religious groups and political constituencies.” Sometimes, no doubt, all of them at once!

But they made another, more unsavory discovery: “Surprisingly, we also found that 10% of the preprints analyzed have sizable (>5%) audience sectors that are associated with right-wing white nationalist communities. Although none of these preprints appear to intentionally espouse any right-wing extremist messages, cases exist in which extremist appropriation comprises more than 50% of the tweets referencing a given preprint.”

It’s a warning to scientists that while they can control the paper itself, they can’t control how it is used or misused. Care must be taken so that good science isn’t repurposed with bad intentions.
