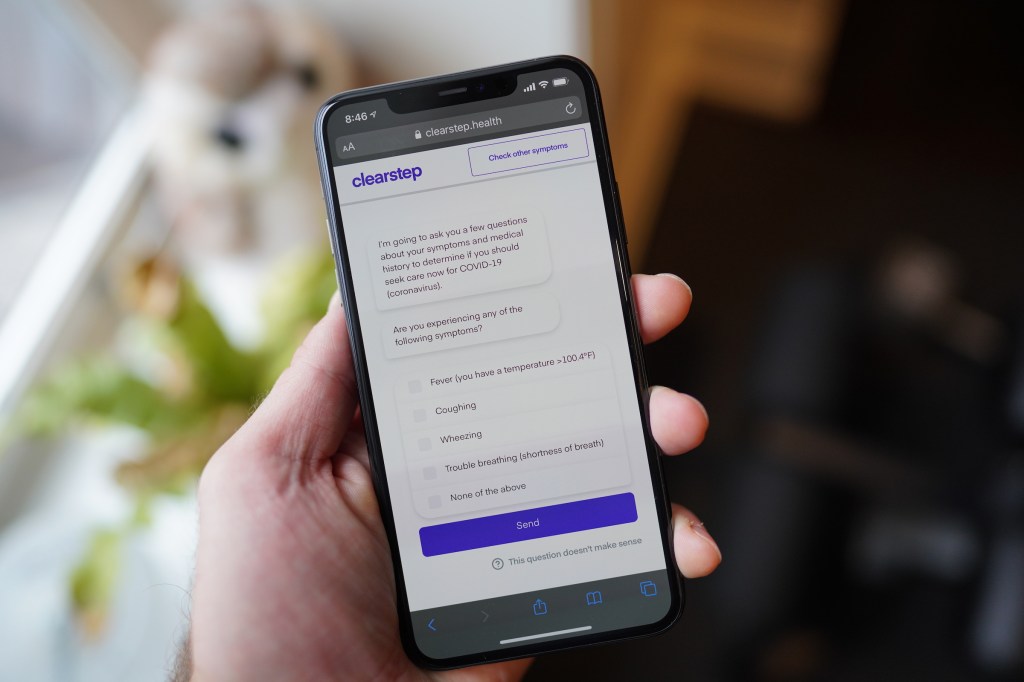

There are a growing number of symptom checker and screening tools you can use at home if you suspect you might have contracted the new coronavirus that is causing the global COVID-19 pandemic. Most of these are relatively simple, asking around three or four questions that cover the symptoms most commonly reported by people confirmed to have had the disease. Chatbot-based symptom checking software startup Clearstep has created its own COVID-19 screener, which goes further by combining symptom checking with screening for potential exposure to the virus.

The reason Clearstep’s tool is designed to go a step further than most is simple, according to co-founder and COO Bilal Naved: many of the symptoms reportedly suffered by people with COVID-19 could also indicate other serious conditions, including an impending heart attack. More effective and comprehensive screening can also help reduce the burden on an already heavily taxed healthcare system, which seems likely only to get busier as the number of cases across the U.S. continues to climb.

Naved and co-founder Adeel Malik, both of whom have worked in healthcare at Johns Hopkins University and have contributed to a number of academic scientific publications, developed Clearstep as a front-line way to connect patients with the right care, using remote screening delivered via a chatbot on their desktop or mobile device. Clearstep’s aim fits naturally with one of the key needs of the ongoing coronavirus pandemic: effective screening that gives individuals clear and accurate guidance about what steps they need to take to seek care, and when.

“Our country is entering a time of a lot of uncertainty, but also a time where there could be a true, critical threat to the integrity of the healthcare system,” Naved said in an interview. “If the rate of infection of this really reaches some projections, we might not have enough hospital beds or ICU beds to deal with all of this […] So it’s all about urgency and speed here and rapid response, but also being able to deliver the highest quality product. We are built off of nurse protocols, and we’re the only ones that have access to this in a publicly available chatbot format that has been used in over 200 million encounters in over 95% of the call centers around the country.”

Clearstep’s screener asks a range of questions about symptoms, travel and potential contact with anyone who has either been diagnosed with COVID-19 or is likely to have it based on their own travel and other factors. Once you go through the questions, which are presented in a fairly standard, easy-to-follow chat message format, the tool gives you an evaluation of the next steps you should take. It advises whether you need testing for COVID-19 based on current CDC guidelines about who should be tested, and alerts you if you should seek care for any other reason, independent of your potential coronavirus exposure.

The Clearstep team is also making sure to stay on top of new research as it emerges regarding how COVID-19 presents and how likely each symptom is in patients. Their approach starts from a data-driven representation of the symptoms that most people are likely to have, then takes the less common presenting symptoms and builds a model in which those compound and add up to a total. The team is “keeping a pulse in the literature” published in peer-reviewed sources and adapting its screener as needed, Naved says.

Ultimately, Naved thinks Clearstep’s contribution lies in its ability to integrate quickly with healthcare providers, offering a triage tool that gives frontline responders a safe way to interface with the public, while also helping to ensure that serious health issues unrelated to COVID-19 that still require care don’t get left behind.

“We were able to go from first conversation to contract signed to configured and implemented in a total of nine days,” Naved says about their speed of response. “The contracts took six days and in three days, we customized it, put it behind their branding, integrated it and deployed it out to an entire population in Florida for a health system there […] The symptom checkers that are being put out there need to be able to integrate with those places that are seeing the massive influx of volume and may not be able to handle it, because that’s our responsibility right now.”
