In an effort to further differentiate itself from rival Facebook, Snapchat this morning expanded its lineup of ad offerings with three new products that take advantage of its already popular Lenses and Geofilters. The new products, World Lenses, Audience Lenses, and Smart Geofilters, represent the largest update to its Sponsored Creative Tools since the first branded Lens debuted in October 2015.

The company says its creative tools are heavily used in its app, which makes them appealing to potential advertisers. On average, more than one in three daily users play with Lenses each day, Snapchat notes, and snaps with Geofilters are viewed more than 1 billion times a day.

The newly introduced World Lenses are an extension of Snapchat’s Sponsored Lenses, which already let advertisers turn users’ selfies into ads. With Sponsored World Lenses, advertisers can also create content for the rest of the photo beyond the face, including floating 2D or 3D objects, interactive content, and other items.

Four types of World Lenses are becoming available: lenses that place a 2D or 3D film character or product into the photo (for photos snapped with the outward-facing camera); action-based lenses, where you look at or tap an AR object to trigger something to happen, like a cloud character vomiting rainbows; “interaction” lenses, such as an in-app game; and environmental lenses, which can add floating lights or ambiance.

Not all iterations of World Lenses are available at launch. For example, Snapchat does not yet support its recently launched 3D objects in World Lens ads, and it didn’t offer an ETA for when they would be available.

According to Snapchat, doubling down on Sponsored Lenses makes sense because these lenses consistently see high engagement times among users, or what Snapchat calls “play time.” The company says Snapchatters play with Sponsored Lenses for more than 15 seconds, on average, before sending them.

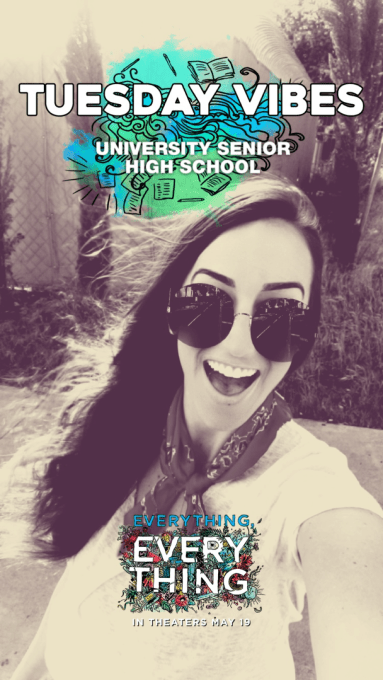

Warner Bros. will be the first advertiser to run a Sponsored World Lens on Snapchat, with a new ad for the joint MGM and Warner Bros. film “Everything, Everything.” Other advertisers planning to run World Lens ads include Netflix, for its show “GLOW,” as well as Dunkin’ Donuts and Glidden Paint.

The World Lenses will be available in all markets where Lenses are sold, including the U.S., U.K., France, Germany, Canada, Australia, and elsewhere.

Snapchat is also rolling out Audience Lenses, which allow advertisers to buy regionally targeted Lenses for the first time. Previously, Lenses could only be bought as nationwide takeovers, Snapchat says.

Audience Lenses let advertisers buy a guaranteed number of Lens impressions for a specific audience. That audience can be defined by demographics such as age and gender, or by Snapchat’s “Lifestyle Categories,” which group users based on their viewing habits in the app’s Discover and Our Stories sections.

Red Bull and MTV have already tested these Audience Lenses, and Lancome’s L’Oreal Paris will launch one soon.

The third new ad product is an updated version of Snapchat’s Geofilters, branded overlays that users activate by swiping left or right on the camera. Citing a survey it commissioned, Snapchat says Geofilters are already very popular with its community: 80 percent of users have used a Geofilter at a restaurant, 66 percent at a mall, and 50 percent at a gym.

The new ad, called a Smart Geofilter, automatically adds location or other real-time information to a nationwide or chain Geofilter. For example, it could automatically include a high school or college name, an airport name, or the user’s state, city, neighborhood, or zip code.

Warner Bros. has also run Smart Geofilter ads for “Everything, Everything,” targeting high school users by featuring the name of their school in the ad.

Beyond the new products, Snapchat says it has made other improvements to its ad business, including faster production timelines for Sponsored Lenses, which now take six weeks instead of eight. It has also added quick-turn options that reuse existing creative content from prior Lenses and take only one to three weeks.

Building out its interactive content helps Snapchat keep users engaged and opening the app repeatedly: daily users open it more than 18 times per day, on average. Snapchat’s daily users now spend more than 30 minutes on the app each day, on average, up from 25 to 30 minutes last quarter. More than 3 billion Snaps are created every day, up from more than 2.5 billion in Q3 2016. And more than 60 percent of Snapchat’s daily users create content every day.

By extending the same set of engaging tools to advertisers, Snapchat hopes advertisers will see the value in the time users spend with their ads, even if Snapchat doesn’t have Facebook’s reach.
