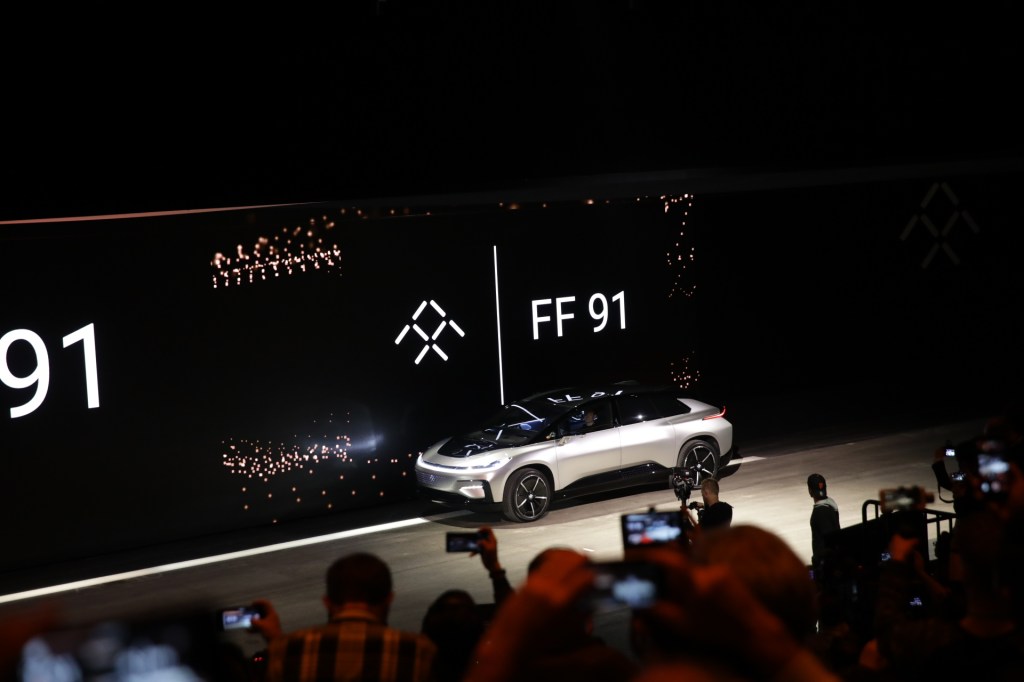

At a very big event in Las Vegas ahead of CES, Faraday Future unveiled the FF 91, its first production car, and showed off a few of its features.

Faraday Future has been in the news a lot lately, but not necessarily for good reasons. In November, construction of the company’s factory was paused, putting its targeted ship date at risk, among a host of other issues. Faraday Future has thus far been very flashy, but it has yet to get a product into the hands of consumers, and it needs to get there. At least part of getting there is finally showing a production car, which Faraday Future tried to do with bravado at the event.

There were two big parts of the demonstration (thus far; we’re live-blogging it here): the performance of the car and its range. Throughout both parts of the presentation, Faraday pitted the car against Tesla’s Model S and Model X. To be fair, those cars are essentially the benchmarks for the electric car industry. And Tesla’s troubles getting enough cars on the road are evidence enough that it’s a very, very hard industry.

The company also demonstrated some of its autonomous driving technology with a video of the car finding a parking spot and parking itself. Owners can send the car off to park itself and summon it through the smartphone application, and the car packs 30 sensors, including cameras and radar, Faraday Future director of self-driving Hong Bae said.

According to Faraday Future, the car is able to hit 60 mph in 2.39 seconds, which, if accurate, would narrowly beat the 2.4 seconds it takes a Tesla Model S to hit 60 mph. The company showed a video of the car beating the Model S in a race, and while it had each car hit full acceleration on stage, the cars did not go head-to-head. So we’ll basically have to go by the video for now, until we actually see the cars race each other in person in an independent setting.

Car fast pic.twitter.com/xg4GJSqxYp

— Matthew Lynley (@mattlynley) January 4, 2017

The FF 91 also has a range, the company says, of 378 miles (EPA adjusted). The Model S has a range of 315 miles (also EPA adjusted). The FF 91 goes farther at a constant 55 miles per hour, according to the company. Its range is enough to get from Silicon Valley (launch point not specified) to Los Angeles “with miles to spare,” VP of propulsion engineering Peter Savagian said on stage.

Beyond that, the car has a few extra flourishes, like dual antennas that enable a Wi-Fi hotspot, facial recognition that allows for keyless entry, and 151 cubic feet of interior space.

To get their hands on the car, buyers have to register and put down a $5,000 deposit. The car will ship in 2018, the company says. Whether it actually works will, of course, be a different story. On stage, the company tried to activate part of its self-driving parking technology, and it didn’t operate. To reiterate, Faraday Future really needs this car to work beyond just a very big, flashy event at CES.

Before we close, here are a few choice quotes from the event:

Faraday SVP of engineering Nick Sampson

“We’re gonna show the first of a new species.”

“Tomorrow is too important for us, and for humanity. We have to flip the automotive industry on its head… independent of fossil fuels.”

“We’re ready to take that final decisive charge into the future… Faraday Future intends to lead that charge.”

“There are companies that have shown great electric vehicles… but while these companies inspire us what they are doing is a slight progression of where we’ve been before.”

Director of self-driving Hong Bae

“A car that adapts to you, a car that can drive itself, a car that is smartest ever.”

“This intelligent entity is also a caring entity. It looks out for you, it protects you… You will grow to trust it.”

Anyway, the event’s all wrapped up, so be sure to check out our live blog for a recap of the whole presentation. Also, Elon Musk has yet to tweet about the announcement or presentation. We’re keeping a close eye on that.
