“What do I type?” is the big question making chatbots hard to use. So today Facebook Messenger is giving chatbot developers new “Quick Reply” buttons and persistent menu options to make their bots easier to navigate.

Messenger bots can also now send videos, audio, GIFs, and files so they can cover a wider range of use cases. People can now rate bots with one to five stars to teach developers how to improve, though there’s no word on the chatbot analytics Facebook has promised. And if customers opt in, developers will be able to connect these customers’ accounts to Messenger accounts to allow more seamless communication with them.

Together, these new features could make chatbots more inviting to the general public who might have been baffled before.

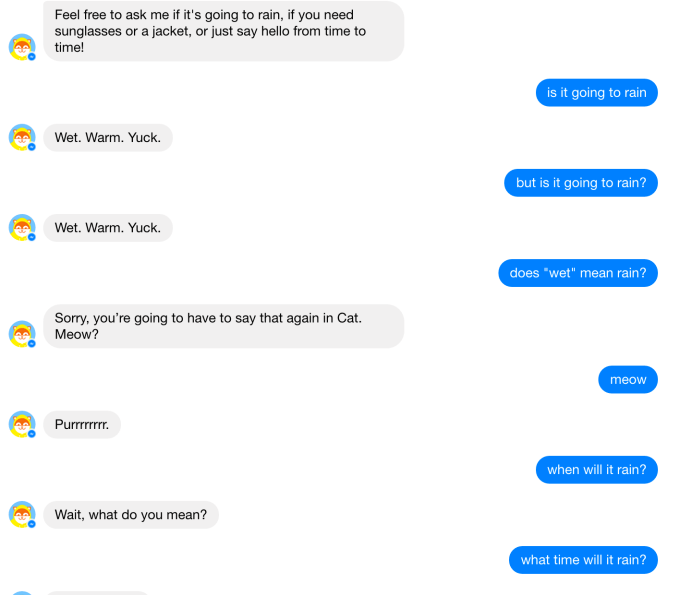

While chatbots have been lauded as the future of interfaces, few have seen widespread use. When Facebook launched its chatbot platform at its F8 conference, it seemed half-baked. People didn’t know which text commands triggered which functions, and bots often misunderstood users’ replies. That in turn led people to quit using bots in frustration and go back to traditional app and website interfaces.

11,000 bots have been built for Messenger since the bot platform launched twelve weeks ago. That follows a separate metric, which Facebook announced at TechCrunch Disrupt NY six weeks ago, that 10,000-plus developers have built for the Messenger platform. Few bots have announced growth milestones, which you’d expect by now if they were in fact growing. [Correction: There are now 11,000 bots, not developers.]

You can watch Messenger’s head of product Stan Chudnovsky discuss the issues with bots here in our fireside chat from Disrupt NY.

Facebook Messenger bets on bots (full panel) #TCDisrupt https://t.co/CsueutuCbQ

— TechCrunch (@TechCrunch) May 10, 2016

Unfortunately, Facebook Messenger and its defective launch examples have soured some people on the whole concept of chatbots. But these new commands could rescue the chatbot platform from becoming the next Facebook Home. The new features are optional for developers to add, but they really should integrate them. Bot makers can find out how at Facebook’s developer blog and new Messenger blog.

With Quick Replies seen above, Messenger can surface examples of responses to a question posed by a bot. When asked your favorite movie genre, you’ll be able to tap Comedy or Action instead of fumbling with typing in commands like “Funny” or “War” that the bot might not understand.

Facebook’s head of Messenger David Marcus writes that Quick Replies “offer a more guided experience for people as they interact with your bot, which helps with expectation management.” Up to 10 buttons can be shown, and they’ll disappear from the chat history leaving only the selected button, which makes it much easier to read back through a conversation with a bot and figure out what happened.
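For bot makers wondering what this looks like in practice, here is a minimal sketch of a message that offers Quick Replies. The only detail taken from the announcement is the 10-button limit. The function name, the `GENRE` payload prefix, and the field names (`quick_replies`, `content_type`, `title`, `payload`) are assumptions for illustration, so check Facebook’s developer documentation for the actual request format before relying on them.

```python
import json

MAX_QUICK_REPLIES = 10  # limit cited in the announcement


def build_quick_reply_message(recipient_id, question, options, payload_prefix="GENRE"):
    """Return a message dict that offers `options` as tappable replies.

    Field names are assumed for illustration, not confirmed by the article.
    """
    if not options:
        raise ValueError("at least one quick reply is required")
    if len(options) > MAX_QUICK_REPLIES:
        raise ValueError(f"at most {MAX_QUICK_REPLIES} quick replies are allowed")

    quick_replies = [
        {
            "content_type": "text",
            "title": title,
            # The payload is what the bot receives when the button is tapped.
            "payload": f"{payload_prefix}_{title.upper().replace(' ', '_')}",
        }
        for title in options
    ]
    return {
        "recipient": {"id": recipient_id},
        "message": {"text": question, "quick_replies": quick_replies},
    }


if __name__ == "__main__":
    message = build_quick_reply_message(
        "USER_ID",  # placeholder recipient
        "What's your favorite movie genre?",
        ["Comedy", "Action"],
    )
    print(json.dumps(message, indent=2))
```

Because each button carries a fixed payload, the bot never has to guess whether “Funny” means Comedy.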

For bots with a more hierarchical style where users might want to dig into a certain utility, then pop back out to the initial options, Facebook is offering the persistent menu seen here. Hidden within a three-line hamburger button at the bottom of the screen, it can be opened to reveal a high-level menu of commands like “Go Shopping”, “On Sale”, “Top Sellers”, and “Help”. Marcus believes these will assist with “re-engagement and consistency.”
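A persistent menu can be thought of as a fixed list of labeled actions that each map to a handler in the bot. The sketch below uses the four example commands mentioned above. The settings shape (`setting_type`, `thread_state`, `call_to_actions`), the `MENU_` payload naming, and both helper functions are hypothetical, meant only to show the idea rather than Facebook’s actual API.

```python
def build_persistent_menu(titles):
    """Return a menu definition with one postback-style entry per title.

    The dict layout is an assumption for illustration.
    """
    if not titles:
        raise ValueError("a menu needs at least one entry")
    return {
        "setting_type": "call_to_actions",
        "thread_state": "existing_thread",
        "call_to_actions": [
            {
                "type": "postback",
                "title": title,
                "payload": "MENU_" + title.upper().replace(" ", "_"),
            }
            for title in titles
        ],
    }


def handle_postback(payload, handlers):
    """Dispatch a tapped menu payload to its handler, with a fallback reply."""
    handler = handlers.get(payload)
    if handler is None:
        return "Sorry, I didn't recognize that option."
    return handler()


if __name__ == "__main__":
    menu = build_persistent_menu(["Go Shopping", "On Sale", "Top Sellers", "Help"])
    handlers = {
        "MENU_GO_SHOPPING": lambda: "Here are our departments.",
        "MENU_HELP": lambda: "Tap the menu at any time to start over.",
    }
    for item in menu["call_to_actions"]:
        print(item["title"], "->", handle_postback(item["payload"], handlers))
```

Since the menu is always available, a user who gets lost deep in one flow can jump back to a top-level command without typing anything.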

Facebook is essentially marrying the conversational responsiveness and accessibility of chatbots with the familiarity and intuitive interface of apps you browse. To win messaging, Facebook may need bots. To offer bots, it needs developers. And to attract developers, it needs an audience. A hybrid of chat and buttons could make bots actually usable.

Perhaps the future doesn’t have to look quite so different from the past.
