Livestreams start boring because broadcasters don’t want to begin the real action until more people have tuned in. That can take a few minutes, even with streams being rapidly distributed via push notifications, tweets and the News Feed.

But by that time, the initial audience may have bounced, and the recorded replay won’t entice viewers later. Facebook Live videos auto-play their first seconds in the News Feed, but no one wants to watch creators twiddle their thumbs saying “Hey, we’re just waiting for more people to join the broadcast.”

That’s why Facebook and Twitter should take cues from other forms of mass media and build a way to stoke interest and assemble viewers before a livestream starts.

Movies begin marketing many months or even years in advance. The promotional blitzes climax in the weeks before the film is released. They want a big pop on opening day that convinces others it’s a zeitgeist moment. Even movie showings themselves open with trailers at the time the film is scheduled to start. The studios want everyone ready in their seats, rapt with anticipation.

Concerts have openers. Sports matches have pre-game shows. And in tech, the big launch events from Apple and Google post their streams well before the CEO takes the stage.

Mobile livestreaming apps need a waiting room.

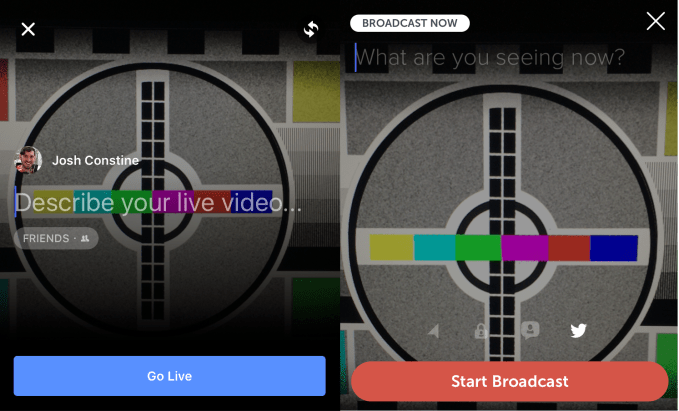

Imagine an alternative to immediately starting your Facebook Live or Periscope broadcast. You’d get a link that you could freely distribute before going live, since it’s typically awkward or impossible to post your stream to other social networks once you’re already on camera. You could potentially set an estimated start time, or wait to begin the broadcast whenever you’re ready. There could even be an option to schedule a stream further in advance, though the platforms and people lining up to watch might worry the broadcaster would flake out.

People who click the link before the stream starts would be dropped into a waiting room. There they’d see a countdown to the estimated start time or a message telling them to hang tight. They could opt to receive a notification when the stream actually begins so they can do something else until then. They’d have the ability to share the stream elsewhere to help it go viral.

To keep people entertained while they wait, Facebook or Periscope could show previous videos or posts from the creator, or other content they think would be relevant. The viewers could also be allowed to chat with each other, or add comments and questions ahead of time so broadcasters have feedback or queries waiting for them when they start the stream. This is similar to how Reddit Ask Me Anything viewers can write their questions before the AMA starts.

When the stream begins, broadcasters would be able to immediately jump into the subject matter because they’d already have an audience. That would make the first few seconds seem more dynamic when people stumble across the livestream or replay in their feeds.

Facebook is already trying to tackle the issue of long livestream replays seeming boring by showing an engagement graph timeline so viewers can skip to the most popular parts of the video.

But by letting creators assemble their fans or friends ahead of time, the videos themselves will rev up faster and receive more real-time feedback that makes them feel urgent and lively. The waiting room could convince celebrities that streaming is worth their time, and make amateurs more confident that someone actually wants to see what they transmit. To maximize the potential of the unpredictable mobile livestreaming format, Facebook and Twitter might need to let broadcasters plan a little.
