Facebook’s newest feature could fundamentally change how you watch video. Until now, you either sat through a video until it got too boring, waited for the interesting part or fast-forwarded hoping to spy something worth seeing. But for clips that weren’t immediately exciting, especially monologues or selfie-streams where the action was in the audio, it was tough to tell if a video deserved your time.

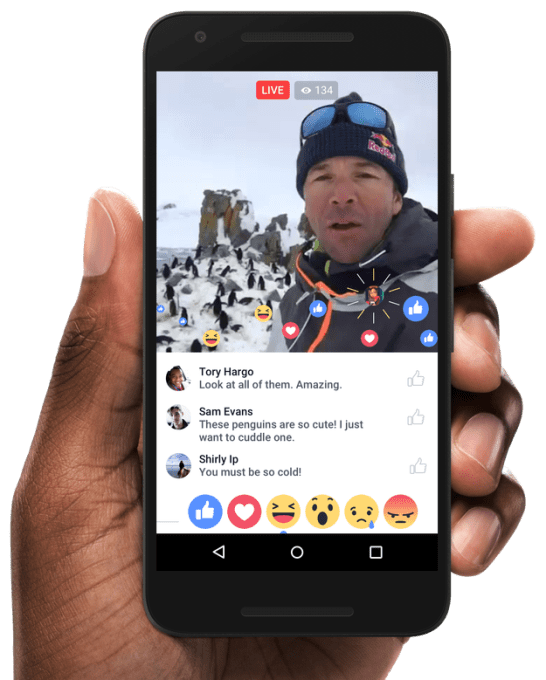

Yet Facebook knows when the good part of a Live video is coming. When entertained or riled up, viewers can fire off Live comments and reaction emojis during the stream that the broadcaster can see, similar to Facebook Live competitor Periscope’s hearts.

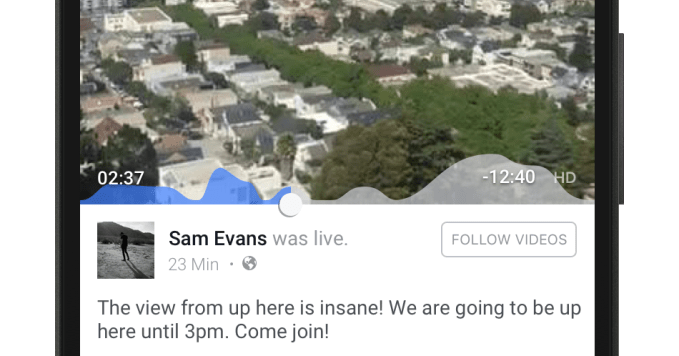

Now Facebook tells me it’s putting reactions to work to power a visualized timeline of when a Live video receives the most engagement. When you go to fast-forward through the recorded replay of a Live clip, you’ll see the graph of reaction volume overlaid on the progress bar.

Essentially, you’ll be able to see when the video gets interesting and skip there if you want.
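Facebook hasn't said how it computes the graph, but the basic idea is easy to picture. Here is a minimal sketch, assuming reactions arrive as timestamps in seconds from the start of the stream. The function names, the 10-second bucket width, and the sample data are all hypothetical and are not Facebook's implementation.

```python
import math

def engagement_graph(reaction_times, duration, bucket_seconds=10):
    """Count reactions in fixed-width time buckets across the video.

    reaction_times: seconds from the start of the stream (assumed input format).
    duration: total video length in seconds.
    """
    n_buckets = max(1, math.ceil(duration / bucket_seconds))
    counts = [0] * n_buckets
    for t in reaction_times:
        # Ignore timestamps that fall outside the video.
        if 0 <= t < duration:
            counts[int(t // bucket_seconds)] += 1
    return counts

def peak_start(counts, bucket_seconds=10):
    """Return the start time, in seconds, of the busiest bucket (first one on ties)."""
    busiest = max(range(len(counts)), key=lambda i: counts[i])
    return busiest * bucket_seconds

# Hypothetical one-minute stream with a burst of reactions around the 15-second mark.
counts = engagement_graph([3, 12, 14, 15, 18, 41], duration=60)
skip_to = peak_start(counts)
```

The bucket counts would supply the heights of the peaks and valleys drawn over the progress bar, and the busiest bucket marks a likely spot to skip to.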

That could influence how videos are shot and paced, and make amateur streams more compelling, but also encourage anxious skipping around that breaks a video’s narrative.

Attention defacebook disorder

“Around two-thirds of the watch time for Facebook Live happens when the video is no longer live, which tells us that people are interested in watching live videos even if they can’t catch them while they’re happening,” Facebook’s head of video Fidji Simo tells me. That might be why Periscope just launched its #Save feature that mimics Facebook, so its users can finally show off replays permanently instead of only for 24 hours before they’re deleted.

Simo notes that “When people watch a live video after the fact, the engagement graph provides a valuable signal that can help people explore the video and easily identify highlights that they may find engaging, which could encourage people to spend more time with a video that they might have otherwise skipped over.”

Facebook says the engagement graph is rolling out to some users now. As shown at the top of this article, users will see blue peaks and valleys representing high and low volumes of engagement so they can easily scrub to the best scenes.

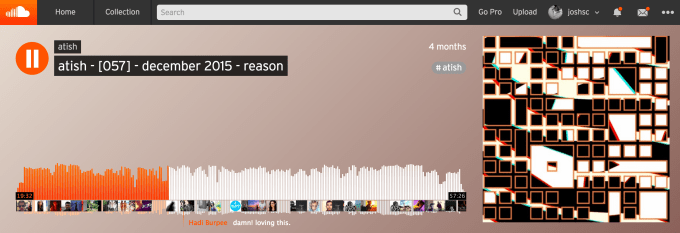

It’s a bold experiment in content consumption. A distant relative might be how SoundCloud both visualizes a song’s sound wave so you can find the big bass drop and shows timed comments pegged to certain moments of a song. Simo explains that “The engagement graph is designed to help people easily navigate a video that was live, especially longer ones, to find the moments that drew the most engagement.”

Facebook also revealed that it’s starting to show Live video reaction replays that appear in sync on recorded versions of broadcasts so it feels like you’re watching in real time. You’ll see the emojis for Likes, Hahas, Sads, and Angrys plus the faces of friends who left them overlaid on the video.

The enabling of impatience could have a profound impact on how people create and consume Live video, or all video if Facebook expands the feature there. For now, it’s rolling out to some users on Live video replays only.

As for coming to traditional videos, Simo says there are “No plans to share. We think the engagement graph is particularly useful for live video, as they are often longer than typical short-form, on-demand videos, and can help people easily discover the parts of the video they might find most interesting.”

The announcement comes as Facebook continues to rapidly push advances to its Live video platform. It now allows Continuous Live Video feeds like nature cams, as well as geo- and age-gating to make streams visible only to certain people.

“We only makin’ the highlights”

The engagement graph feature could make creators feel more comfortable filming slow build-ups to big climaxes, because viewers can peek to see that something special is coming up and zip there if they’re antsy. Primetime TV shows have to be scripted with mid-episode cliffhangers to keep audiences tuned in through the commercial breaks. Similarly, the engagement graph could push broadcasters to pepper their streams with moments of delight.

It could help amateur livestreamers get friends to at least watch the highlights of the streams even if the first few seconds that auto-play in the News Feed look drab. That’s Simo’s theory. And if users are less worried about boring their friends to death, they might be more confident about hopping in front of the camera.

“Nothing beats watching a live video while it’s happening, but it’s not always possible to catch all broadcasts live,” Simo shared. “While we can’t totally replicate the experience of watching live, we want to help people feel ‘in’ on the action after the fact.”

At the same time, viewers might quickly bounce from broadcasts that don’t show an upcoming spike in engagement. Clips could see a sudden exodus if they drag on past their brightest moment. Any sense of coherent, linear storytelling might be fractured by itchy trigger fingers. The ability to preview the future entertainment value of a video could make the format more utilitarian and less like art if viewers merely opt for the visual crib notes.

The mobile live streaming medium is so new that norms are still emerging. We’ll have to wait and see how engagement graphs impact Facebook Live.

Facebook built the News Feed itself to help us skip to the good parts of our social graph’s collective experience. As the democratization of video creation tools leads to an explosion of the quantity of content produced, we’ll need ways to sort through it, too.
