At Google’s I/O conference today, the company’s Advanced Technology and Projects (ATAP) research unit offered an update on Project Jacquard, the interactive textiles project it unveiled last year. ATAP’s Ivan Poupyrev announced that the company is collaborating with iconic clothing company Levi’s to launch a “connected” smart jacket aimed at urban cyclists. The jacket will allow wearers to control their music, answer phone calls, access navigation and more, all by tapping and swiping on the sleeve.

Google’s partnership with Levi’s was first announced last year, but the two companies hadn’t yet disclosed how the clothing maker would implement Project Jacquard’s technology.

In case you missed it previously, this project involves weaving multi-touch sensors into clothes in order to make what you’re wearing the new…well…“wearable” computing device.

The idea with this new Levi’s Commuter jacket, explained the company, is to make something that is both fashionable to wear and a practical implementation of the technology.

Today, cyclists often have to fuss with their phone while commuting on busy streets, which is dangerous.

With Levi’s Commuter jacket, they’ll instead be able to just touch their jacket’s cuff, using gestures to control functions that would otherwise require pulling out their phone.

The jacket will be a part of Levi’s Commuter collection of clothing, which is largely aimed at urban dwellers who ride bikes to navigate their city.

A Jacquard tag embedded in the jacket’s sleeve makes this functionality possible, and it can be pulled out and charged via USB. This tag connects with the LED, haptics, battery and the woven sensor in the garment. The connection points for the tech cleverly take advantage of the jacket’s button-hole to look less obtrusive.

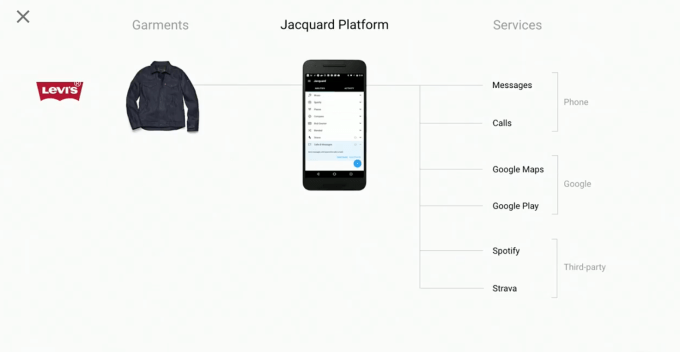

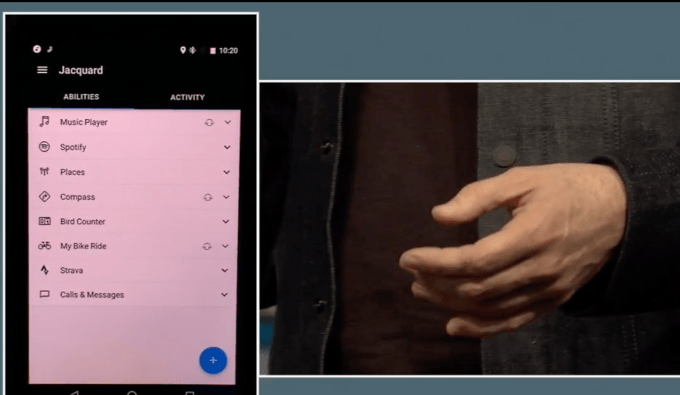

In addition, the platform includes a mobile application that connects your smart clothes to the cloud. Here is where consumers will control the apps that work with the connected garment.

Plus, the company stressed, you can use the Levi’s jacket like any other article of clothing — wad it up, throw it in the wash, etc.

“There’s a unique challenge in creating a smart clothes platform — fashion and technology have to work as one but there’s inherent tension between the two,” said Poupyrev. “Technology is fragile, garments… are not.”

In addition to controlling native phone functions like calls, as well as Google Maps and Google Play Music, Google says the jackets will interoperate with third-party services. That means you’ll be able to use touch gestures to control your Spotify music, for example, or a connected fitness app like Strava. APIs will also be made available.

During a demo on stage at the event, the companies showed off how the jacket worked.

For instance, running fingers up and down the cuff controlled the music volume. Another feature, “Compass,” was accessed with a swipe. After doing so, a voice assistant informed the wearer, Levi’s VP of Innovation Paul Dillinger, of their next meeting time and how long it would take to arrive.

While the demo went off well, you could see there was a slight stiffness on the cuff where the sensors were woven in, and a bit of a bulge. It’s unclear how comfortable that will be — or how attractive.

Google says it plans to work with other apparel makers in the future to expand Jacquard’s reach, including athletic clothing companies and those who design business wear. (Though not mentioned out loud, Cintas’ logo briefly appeared on one slide during the partners portion of the presentation.)

Perhaps of most interest is that this jacket isn’t some far-off pipe dream, as it turns out — it will “launch” into beta testing this fall, then become publicly available in spring 2017, says Google.

Pricing info was not offered.
