Colin O’Donnell

Our cities face a choice: find creative ways to integrate today’s technology into their DNA or have entire systems get left behind, widening the equality gulf for people who rely on them. Urban populations are growing, infrastructure is aging and needs are evolving (access to the Internet is as important as electricity), but the foundations on which cities rest are set in stone. To keep up with the demands of modern urban populations, cities need ways to integrate new solutions into old ways of doing things.

Starting from scratch isn’t the only way to build a smart city. We can turn today’s legacy cities into successful smart cities by incorporating technology and innovation in layers, creating a 21st-century experience overlaid on 20th-century infrastructure. Upgrades don’t have to come in the form of $100 million fixes. Bit by bit, cities are becoming smart through the application of consumer technology to the urban space. The question isn’t whether we can cover the city in Wi-Fi; it already is.

The question is authentication across the networks that are already there. The challenge isn’t covering the city in sensors; it’s already bristling with cameras and motion detectors. The challenge is creating the incentives for people to collect and share data in responsible ways.

The promise of building a smart city from the ground up — a greenfield utopia that would give technologists and urbanists the opportunity to design future-proof, technologically native systems and infrastructure — is intriguing, but what about legacy cities that have trillions of dollars in fixed infrastructure like streets, light poles, transit systems and garbage trucks? How do these old systems stay relevant as unstoppable technology continues to march on?

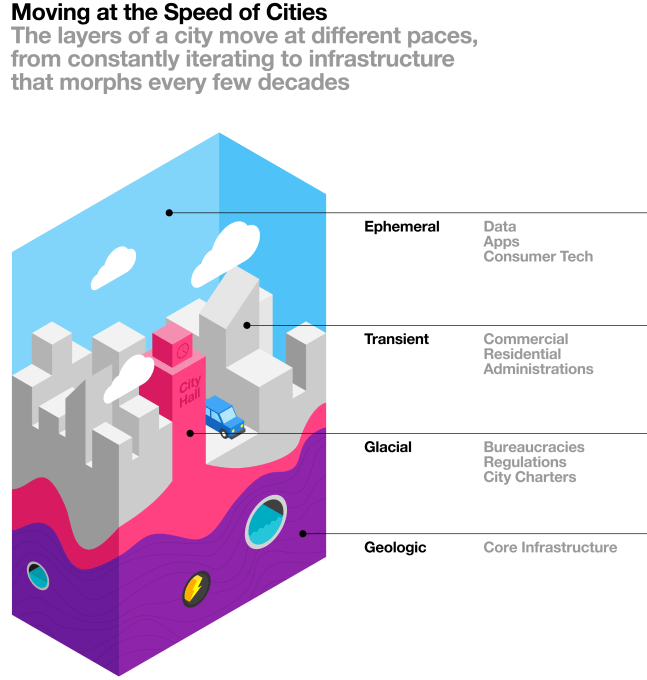

While city foundations don’t change much, we can alter, update and leverage the ephemeral and transient layers of cities (things like mobile technology, beacons and smart data applications) to keep the more static layers (street grids, water systems) relevant and user-friendly.

Technologies built on top of these massive systems have the advantage of serving a wider, built-in audience and tying into daily life in a way that no standalone mobile app ever could. Sixty million visitors on New York subways every year could certainly solve the empty room problem for a transportation startup.

Cities need to get with the program. Regulations and planning aimed at 20th-century problems are holding back new digitally enabled services such as electric bikes, drones, alternative transportation and gig-economy job growth. Instead, cities should figure out what they need to do to make these new services safe, how they can support them and how, in return, they can benefit from their success. For example, LA is talking about charging hotel tax on Airbnb to raise funds to help pay for affordable housing.

If we don’t get it right, cities risk getting ripped apart, with individual layers isolated from one another. If entrepreneurs move too fast without city buy-in, they risk slamming into regulatory walls that waste energy and capital on legal battles and political dramas. And if cities move too slowly, startups will simply find a way to work around them, leaving transit systems, power grids and highways to moulder.

Rather than letting existing systems fall by the wayside, we can keep our infrastructure current by layering today’s mobile, sensing and data technologies onto it. By enhancing function and usability as we go, we can keep these dinosaurs relevant in the digital age.

At the center of these upgrades, of course, are privacy, user protection and equity. Any city-related solution will have to put user safety and experience at the forefront, ensuring any data collected is subject to strong access and user controls, and that services and experiences are opt-in while serving the widest audience possible. Both cities and private industry must be on the hook for this.

Finally, for startups and cities to work together to make all of this happen, there needs to be an understanding of the speed of cities. A city is a complex web of systems with layers that move at radically different paces.

On one end is the ephemeral: consumer technology, data and mobile apps that are constantly changing. Thanks to this technology, urban innovation is happening at lightning speed between agencies, with city vendors, amongst new startups taking over entire industries and especially among consumers and small businesses with products like Nest and Placemeter. Not to mention what’s happening with the social and commercial aspects of cities: Facebook, Instagram, Apple, and Android Pay all have a major role to play in making cities more responsive, effective and, yes, smart.

Meanwhile, on the other end of the spectrum, traditional city infrastructure moves at a glacial pace. The conduits that carry the broadband lifeblood of New York City were built more than a century ago. Garbage trucks and subway cars have a useful life of more than 30 years, meaning a garbage truck bought in 2016 will be on the streets in 2046 — we will have the singularity co-existing with a massive, diesel-breathing, human-driven steel behemoth!

The trick is not to get bogged down in the many slow-moving layers of the city, but to build on top of the legacy infrastructure, taking advantage of the built-in investments and amazing scale. The greatest successes will rest on the efficient mixing of old and new, fast and slow, nimble and titanic. It’s up to technologists and entrepreneurs to learn the dance, and cities to let them in.
