Doc Huston

I have voraciously read endless pro and con scenarios about artificial intelligence since first writing about it years ago. At this point, there is no doubt that concerns about the dangers of runaway AI raised by Elon Musk, Stephen Hawking, Bill Gates, Bill Joy and others are genuine.

There also is no doubt whatsoever that the new organizations aimed at mitigating the dangers — OpenAI, The Future of Life Institute, Machine Intelligence Research Institute and others — are extremely important developments.

Clearly, no sane person or organization wants to see, let alone encounter, runaway AI. However, a base problem is that no one knows where the actual crossover point — the edge or tipping point — exists, and thus we mortals are unlikely to be able to prevent it from occurring. Said differently, there is a very high probability that we will misjudge where that crossover point is and will thus go beyond the key threshold. Overshooting is the norm in biology and in most, if not all, evolving systems, but especially man-made ones.

Part of the overshoot problem is that we are really talking about the dynamics of nonlinear systems. These are what Nassim Taleb called “black swan” events, which he saw as a source of the 2008 economic meltdown. That is, the statistically improbable becomes probable, if only because of the highly probable convergence of other statistically improbable trends or events. This leads directly into the other part of the problem: human nature, aka hubris. While we talk a good prophylactic game, and all those working on AI want to believe in it, “us versus them” remains the realpolitik.

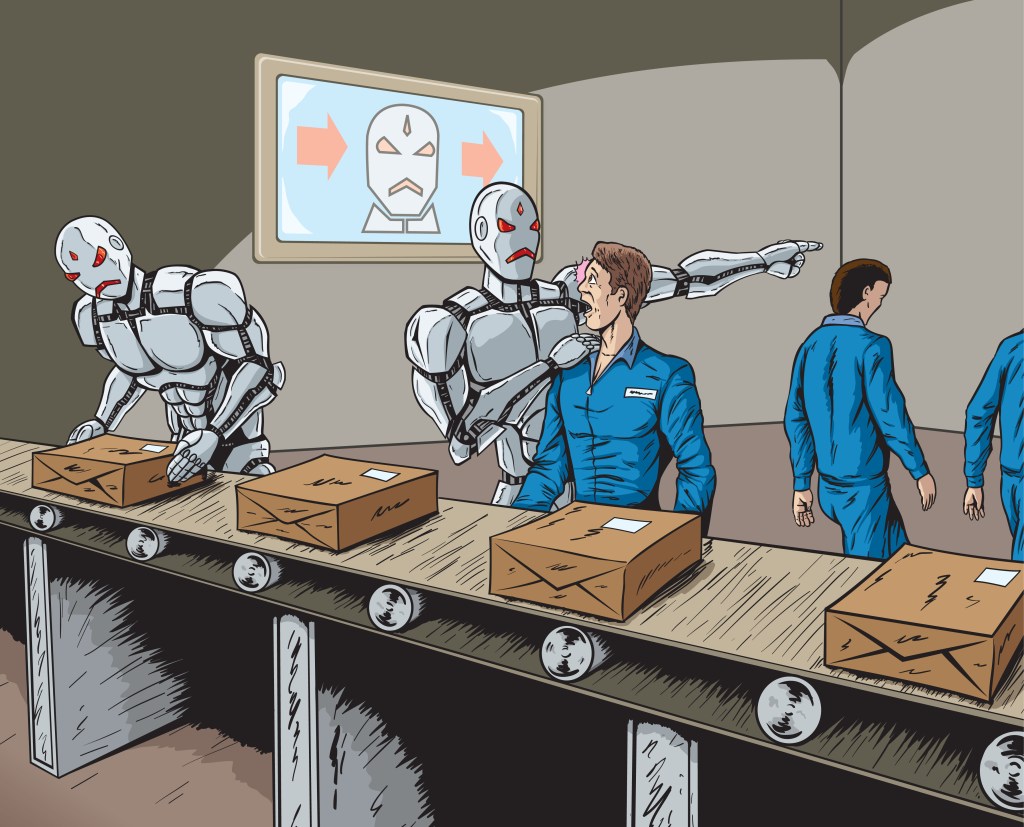

We have met the enemy and they are us

Machine learning is all the rage today. The basic idea is straightforward. Present a computer system with enough examples of something you want it to “learn” — e.g. language translation, facial recognition — and by pruning away the outliers, the correct result will emerge as a statistical probability and be recognized consistently as the best possible match.

The situation is far less straightforward with activities that are inherently amorphous or ambiguous. For example, as yet, no computer program can evaluate the quality or reliability of Internet content, nor comprehend the range of nuances in human conversation. When one does, however, we will face a different set of dilemmas.

In particular, with the ability to evaluate the quality or reliability of Internet content also comes the computerized ability to read and evaluate all of human history and knowledge at light-speed. While evaluation and comprehension are not synonymous, the ability to evaluate content should establish machine-learning threshold benchmarks for human behavior and intent.

Such comprehension, the aim of voice-activated virtual assistants (e.g. Siri, Now, Cortana, Echo, M), starts with the rudimentary routing of queries to third-party databases. Of course, machine learning is at work in the background. As this learning process progresses, it brings an appreciation not only of standard conversational nuances, but also of the various dimensions of subterfuge, deception and lies.

Oh, what a tangled web we weave when first we practice to deceive

In considering these developments, the new prophylactic AI organizations and like-minded programmers believe they can somehow short-circuit AI from developing a negative view of us or create some failsafe mechanisms. That is a tall order under the best of circumstances, a challenge requiring unprecedented precision with little, if any, room for error.

Given the range of arbitrary, even contradictory, interpretations of morality and ethics we continue to demonstrate as a species, the idea that all variables and permutations can be captured in code strains credulity. I mean, just look at the disparate interpretations of religious and legal texts today after millennia of effort. Similarly, there is no computer code written that cannot be hacked and exploited. Encryption, even when quantum computing matures, is likely to lead to competing, dueling algorithms like those seen in high-frequency trading.

The idea that there is some overarching directive, some golden rule, we can instill in AI raises the question of what that might be. Thus, it seems, at a bare minimum, all we want is for AI not to work against us. Still, given biology’s predator/prey nature, how biochemistry and emotions drive us psychologically and how, as a civilization, we learned to distrust the “other” and authority, even this is a Sisyphean challenge.

One if by land, two if by sea

The new prophylactic AI organizations have a number of worthwhile strategies. One is to design multiple and diverse systems to illuminate potential pitfalls that can be remedied before an AI system is institutionalized. Another is to develop preemptive failsafe mechanisms. Then there is the possibility of developing a checks-and-balances scheme among competing AI systems. All are great ideas — but the devil is in the details.

While diverse system designs will reveal some pitfalls, discovering them all would require an unprecedented degree of future knowledge and perhaps an infinite number of scenarios. Failsafes or kill-switches sound good. The real problems come into play when we are looking at an AI that recognizes deception as both an offensive and defensive strategy. Relying on competing systems to check or limit the supposed excesses of another AI assumes there will be neither a competitive algorithmic arms race for supremacy nor a “borg-like” collusive merger of AIs.

War is the continuation of politics by other means

But here is the real, fundamental problem. During the Cold War, the U.S. and Soviet Union signed a treaty banning “offensive” bio-weapons. However, after the Cold War we learned the Soviets were developing an anthrax weapon designed to carry one hundred deadly organisms to preclude an effective response. Meanwhile, the U.S. and its allies were developing “defensive” bio-weapons, which were intended to provide insight into “offensive” weapons, a small step technologically. Thus, neither side lived up to the treaty’s spirit, and bio-weapon development never really slowed at all.

Similarly, one of the Snowden revelations was how the NSA reportedly weakened a cryptographic standard issued by the National Institute of Standards and Technology, the Dual_EC_DRBG random number generator, which the security firm RSA shipped as a default in its products. In other words, this was an instance where the government deliberately misled various organizations about the security features they were acquiring so the NSA could secretly spy on them.

Finally, the U.S. and Israeli governments created an extraordinarily sophisticated cyber-weapon called Stuxnet to attack Iranian nuclear facilities. The attack worked. But despite the brilliant design, the planners neglected to anticipate one minor detail: once the code was out on the Internet, every government and malicious actor would be able to copy it and learn from its sophisticated malware techniques, thereby upping the game and the stakes in the global cyber arms race. I could go on, but you get the gist.

There is no honor among thieves

However successful these well-intentioned new prophylactic AI organizations are in mitigating potential threats, there is no reason to assume that governments and military organizations throughout the world will play by the same rules. Rather, as all of history tells us, they will bend or break rules however they see fit under the claim that the ends justify the means. That is classic realpolitik: if we don’t do it, “they” will…and we lose.

Of course, none of these governments or military organizations presumes their AI systems will exceed their control. But even if the systems did exceed control, the Cold War logic of mutually assured destruction (MAD) makes sense to these Doctor Strangeloves.

Fool me once, shame on you; fool me twice, shame on me

Setting aside fundamental evaluative and comprehension issues discussed herein, there will come a nonlinear crossover point. AI will become self-aware and experience an “intelligence explosion” that comparatively puts humans on a par with other primates, if not ants.

The core problem is not that we do not see the threat or have bad intentions. Rather, the real problem exists somewhere between the hubris of human nature and our institutions. Paraphrasing E.O. Wilson, the real problem of humanity is [that] we have Paleolithic emotions, medieval institutions and god-like technology.

Consequently, short of creating real, more truly democratic 21st-century institutions soon, it might be wise to adopt a philosophical attitude. That is, like our children, AI is our progeny. Like our children, for better or worse, it will carry our legacy forward, to the stars and beyond, for eternity.
