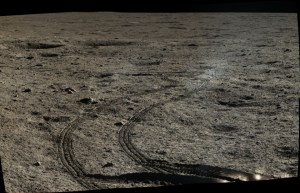

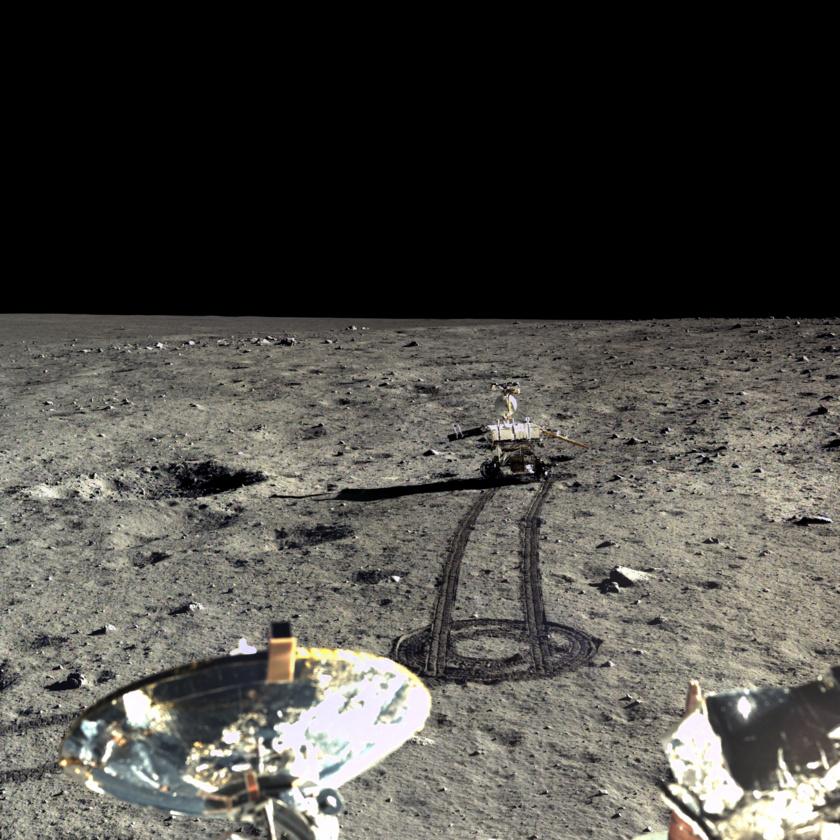

This month, the China National Space Administration released all of the images from its recent moon landing to the public. Hundreds of never-before-seen true-color, high-definition photos of the lunar surface are now available for download.

The images were taken a few years ago by cameras on the Chang’e 3 lander and Yutu rover. In December of 2013, China joined the ranks of the Soviet Union and the United States when it successfully soft-landed on the lunar surface, becoming the third country ever to accomplish this feat.

What made China’s mission especially remarkable was that it was the first soft landing on the moon in 37 years, since the Soviet Union landed its Luna 24 probe in 1976.

Today, anyone can create a user account on China’s Science and Application Center for Moon and Deepspace Exploration website and download the pictures themselves. The process is a bit cumbersome, and the connection to the website is spotty if you’re accessing it from outside China.

Luckily, Emily Lakdawalla from the Planetary Society spent the last week navigating the Chinese database and is currently hosting a suite of China’s lunar images on the Planetary Society website.

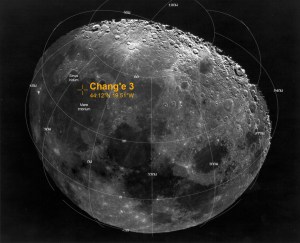

Chang’e 3, named after the goddess of the Moon in Chinese mythology, was a follow-up mission to Chang’e 1 and Chang’e 2, which were both lunar orbiters. The objective of the Chang’e 3 mission was to demonstrate the key technologies required for a soft moon landing and rover exploration. The mission was also equipped with a telescope and instruments to perform geologic analysis of the lunar surface.

Once the 1,200 kg Chang’e 3 lander reached the surface at a location known as Mare Imbrium, it deployed the 140 kg Yutu rover, whose name translates to “Jade Rabbit.” The Yutu rover was equipped with six wheels, a radar instrument, and X-ray, visible and near-infrared spectrometers (instruments that can measure the intensity of different wavelengths of light). Yutu’s geologic analysis suggested that the lunar surface is less homogeneous than originally thought.

Because Yutu could not properly shield itself from the brutally cold lunar night, it experienced serious mobility issues in early 2014 and was left unable to move across the surface. Remarkably, however, Yutu retained the ability to collect data, send and receive signals, and record images and video until March of 2015.

Today, the Yutu rover, which could send and receive transmissions from Earth, is no longer operational.

China’s follow-up mission, Chang’e 4, is scheduled to launch as early as 2018 and plans to land on the far side of the moon. If this happens, China will become the first nation to land a probe on the lunar far side.

With the Chang’e series, China has shown that, unlike NASA, its focus is on lunar, rather than Martian, exploration. But China is not the only one with its sights set on the moon. Through the Google Lunar XPRIZE, a number of private companies are building spacecraft designed to soft-land on the lunar surface in the next few years.

One of those companies, Moon Express, plans to be the first private company ever to land a spacecraft on the moon and has already secured a launch for its spacecraft in 2017.

Before Chang’e 3, it had been nearly 40 years since anyone soft-landed a spacecraft on the moon. This next decade, however, is set to see a wave of lunar exploration like we’ve never experienced. With the China National Space Administration focusing its resources on lunar probes, and private companies planning to profit from lunar resources, the moon is about to become a much busier destination.
