Outside big events like concerts, tons of Ubers can be waiting at once, making it tough for passengers to figure out which car is theirs. That’s why last week I suggested Uber create a feature that would give passengers and drivers a visual signal they could use to find each other. Today, Uber launched exactly that.

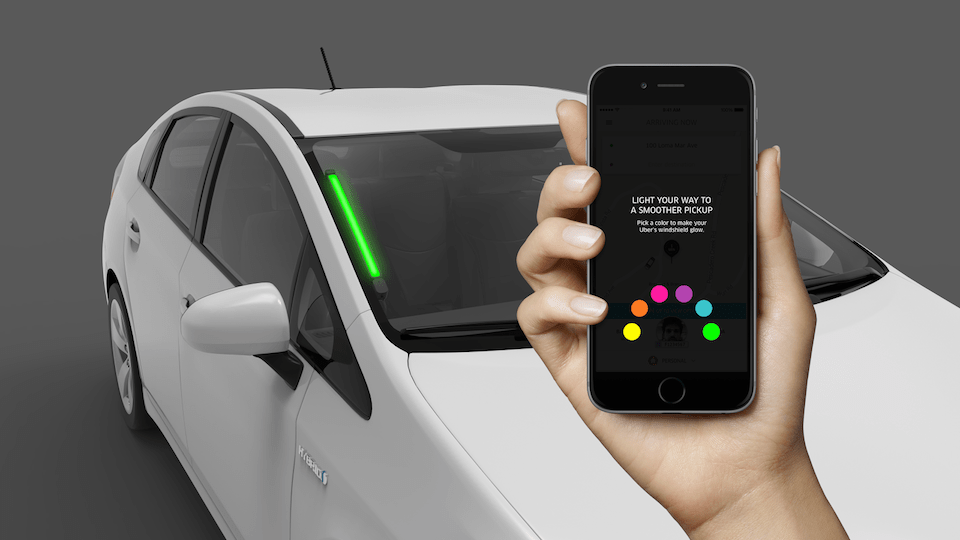

It’s called SPOT, and it involves drivers installing an LED light on their windshield that can glow in different colors. While waiting to be picked up, the passenger taps a colored button (yellow, orange, pink, purple, blue, or green) and then looks for the Uber whose SPOT light shows the matching color. Passengers can even press and hold one of the buttons to turn their phone’s screen that color and wave it in the air to help their driver find them.

This way, passengers won’t accidentally open the door of the wrong Uber, or even a non-Uber civilian’s car, which I can attest is quite awkward. Previously, passengers had to rely on Uber’s sometimes-laggy map, generic car types, and hard-to-read license plates.

The feature goes into testing in Seattle, and if it’s popular and helps Uber, it could be rolled out to more markets.

The big problem here is that Uber has failed to get drivers to uniformly adopt any technology beyond the car they provide themselves. Drivers do display the Uber windshield sticker, but many don’t even carry charging cords for iPhones and Androids, and few have the auxiliary cable needed to run Uber’s Spotify integration. It would be tough, and potentially expensive, for Uber to distribute SPOT devices and train drivers to use them consistently.

SPOT is just one part of Uber’s pursuit of “The Perfect Ride,” as I described last week. Essentially, Uber is trying to shave seconds off every part of the ride, from the hail to the drop-off, using features like suggested pickup points, back-to-back rides, driver destinations, and its mapping cars.

One more suggestion for getting closer to that perfect ride: Uber could learn how long each passenger typically takes to come outside. If someone is usually a few minutes late to come out after their Uber arrives, Uber could alert them while the driver is still farther away, instead of waiting until the car is nearly there as it would for a passenger who walks out as soon as they’re notified.
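To make that suggestion concrete, here’s a rough sketch of how such timing could work. It’s purely hypothetical: the function names, the use of a rider’s median past delay, and the five-minute cap are my own assumptions, not anything Uber has described.

```python
from statistics import median

# Hypothetical sketch: not Uber's actual system or API.
def notification_lead_time(past_delays_s, default_lead_s=0, max_lead_s=300):
    """Seconds before the driver's arrival at which to alert this rider.

    past_delays_s: how long (in seconds) the rider took to reach the car
    after the "your driver has arrived" alert on previous trips.
    """
    if not past_delays_s:
        # No history yet: alert on arrival, as today.
        return default_lead_s
    # The median is less sensitive than the mean to one unusually slow pickup.
    typical = median(past_delays_s)
    # Clamp so we never alert more than max_lead_s early, and never go negative.
    return max(0, min(typical, max_lead_s))


def should_notify(driver_eta_s, past_delays_s):
    """True once the driver is close enough that this rider should head out."""
    return driver_eta_s <= notification_lead_time(past_delays_s)


# A rider who usually takes 2 to 3 minutes gets alerted earlier than a punctual one.
slow_rider = [120, 180, 150]
punctual_rider = [0, 0, 10]
print(should_notify(100, slow_rider))      # driver 100 s away
print(should_notify(100, punctual_rider))  # same distance, punctual rider
```

Using the median rather than the mean keeps one unusually slow pickup from making the app nag a normally punctual rider too early.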

With SPOT, if Uber can get passengers into their cars faster, drivers burn less gas waiting and can finish each ride sooner and pick up someone else. That means Uber makes more money, and drivers earn more per hour of driving, so they’re more likely to stay on the platform. Meanwhile, passengers reach their destinations faster, boosting satisfaction and user retention for Uber.
