As Google retools its Glass experiment, researchers at Stanford are using the device to help autistic children recognize and classify emotions.

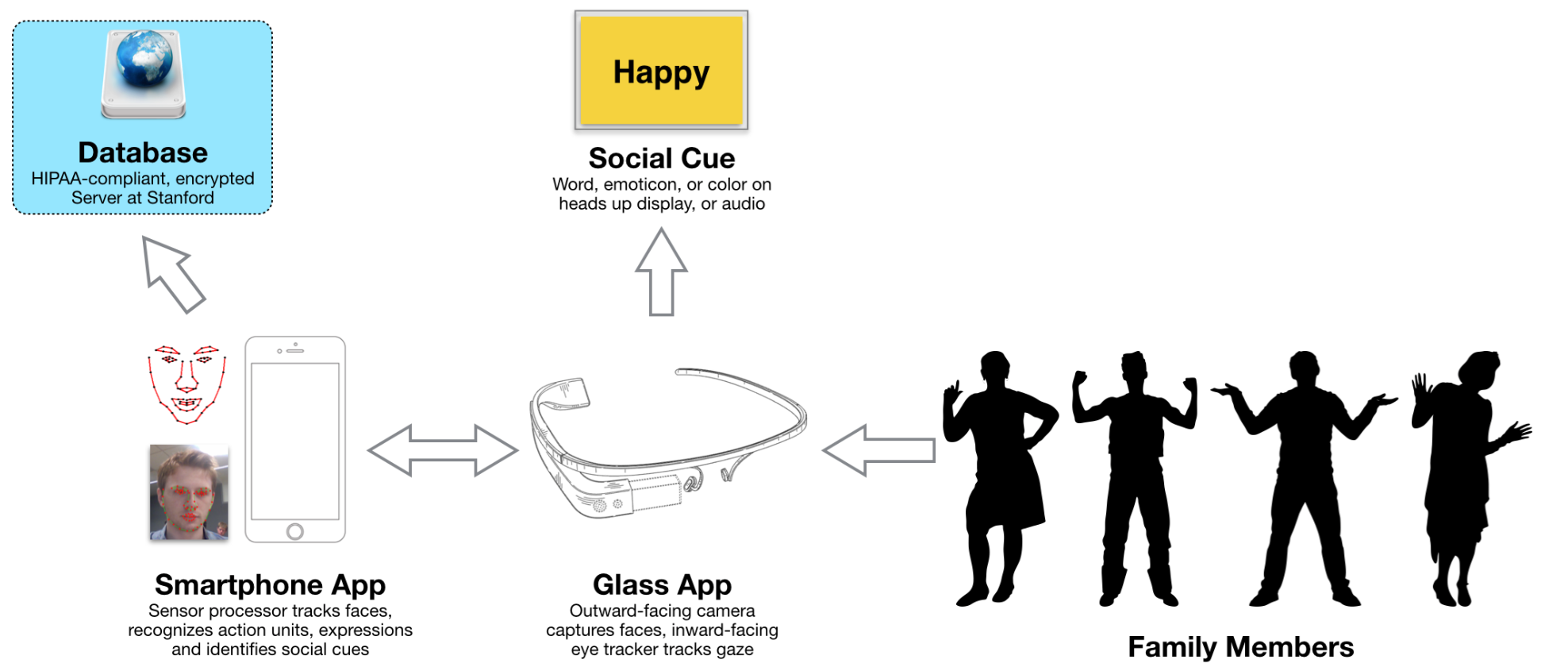

In a small office buried inside an administrative building at Stanford, Catalin Voss and Nick Haber are pairing face-tracking technology with machine learning to build at-home treatments for autism. The Autism Glass Project, a part of the Wall Lab in the Stanford School of Medicine, launches the second phase of its study Monday morning.

The software uses machine learning for feature extraction, detecting what Voss calls ‘action units’ in faces.

The project’s second phase is a 100-child study to investigate the system’s viability as an at-home autism treatment. The Autism Glass Project’s software classifies emotions in faces that the device is pointed at, and instantly gives users a read on the face’s expression.

Using images to translate emotions for children is only the first hurdle to cross, though. The bigger issue the team faced was ensuring the device’s use led to measurable learning when the children were no longer on the device.

“We didn’t want this to be a prosthesis,” emphasized Haber.

In an effort to understand this off-device learning, the team launched the project’s first phase last year, which involved 40 studies and was conducted in the lab. Initially, the study was constrained because the Wall Lab had access to only one Google Glass device, but this changed in early June this year, when Google donated 35 more devices and the Packard Foundation awarded the project a $379,408 grant.

![Screen_Shot_2014-09-15_at_5.27.53_PM[1]](https://techcrunch.com/wp-content/uploads/2015/10/screen_shot_2014-09-15_at_5-27-53_pm1.png)

After studying the interaction between children and computer screens, the team set out to design a second phase that would allow children to “interact with their surrounding,” Voss said. The team settled on a game developed by MIT’s Media Lab called “Capture the Smile.”

In the game, children wear Glass and search for individuals with a specific emotion on their faces. The team monitors performance in the game and combines it with video analysis and parental questionnaires to build a ‘quantitative phenotype’ of autism for each study participant, providing a mathematical observation of the physical manifestations of their autism. By tracking this over time, the team can show how their device helps improve emotion recognition over the long term.

The second phase of the study will last several months, and the Autism Glass Project’s unique technology will allow increased parental involvement in the treatment process.

“You can start to ask questions, of the percentage of time that the child is talking to his mom, how much is the child looking at her?” said Voss.

Although the study only requires children to wear the Google Glass device for three 20-minute periods each day, analyzing what the children are looking at will help the Wall Lab’s researchers develop a better understanding of how visual engagement plays a role in the emotion detection process.

As massive as the second phase of the project is, it is only the first of many steps for Voss and his team. Wall explained the researchers need to gather clinical data and obtain a code from the American Medical Association before the therapy can become reimbursable, and therefore widespread.

Wall hopes that if the technology is validated for widespread clinical use, the team will achieve his goal of widening the bottleneck for autism treatment. Although the current study has only 100 participants, future work will allow the team to improve its software and techniques with an ever-widening data set that will be the first of its kind. The project is accepting applications to join the program through its website starting today.

Though the technology may not be widespread yet, it already has been featured in a book series about an autistic superhero. In the second book in the “Trueman Bradley” series by Alexei Russell, the protagonist receives a set of glasses that let him ‘see’ emotions – a powerful tribute to Voss, Haber, and team.

“If you think about it,” Voss said, “what we’re doing is giving children with autism superpowers.”
