Don’t call it Force Touch — Apple’s new flagship feature 3D Touch is a completely different software implementation of the technology that is already available on the Apple Watch and the new MacBook trackpad. 3D Touch is by far the most important improvement in the new iPhone 6s and iPhone 6s Plus. It’s going to change the way we interact with our phones.

But first, let’s talk about what 3D Touch is and what it can do. Phil Schiller and Craig Federighi called it “the next generation of multi-touch.” While this is a hyperbolic marketing statement, it is somewhat true.

With your current phone, you can tap, swipe and pinch on your screen. These three gestures alone were already huge as they changed the way designers worked — you can’t design a website like an app. Over the years, many clever gestures appeared to make you do stuff faster.

For example, Loren Brichter introduced pull-to-refresh in feed-based apps with his Twitter client, Tweetie 2.0. Path 2.0 introduced clever floating shortcuts. Facebook popularized the hamburger menu that is now slowly going out of fashion. Snapchat made swipeable menus popular. Mailbox, Tinder and so many other apps invented neat little ways to replace simple on-screen buttons.

All these innovations were pushing mobile design forward along with OS-based gestures, such as Control Center and the Notifications screen. But how do you go to the next level and invent new ways to be more productive on your phone? It turns out the answer was right in front of us all along. Even though a pinch and a swipe are very different, these are all 2D-based gestures.

Apple renamed Force Touch to 3D Touch for this exact reason: the company just upgraded the iPhone’s display with a third dimension when it comes to user experience. This might be one of iOS 9’s most important features, and we just saw it a week before iOS 9 is going to be released on September 16.

As always, Apple is leading by example and showing what you can do with this entirely new field of gestures. At first, app developers are going to mimic Apple’s gestures. Then they are going to invent brand new ones of their own.

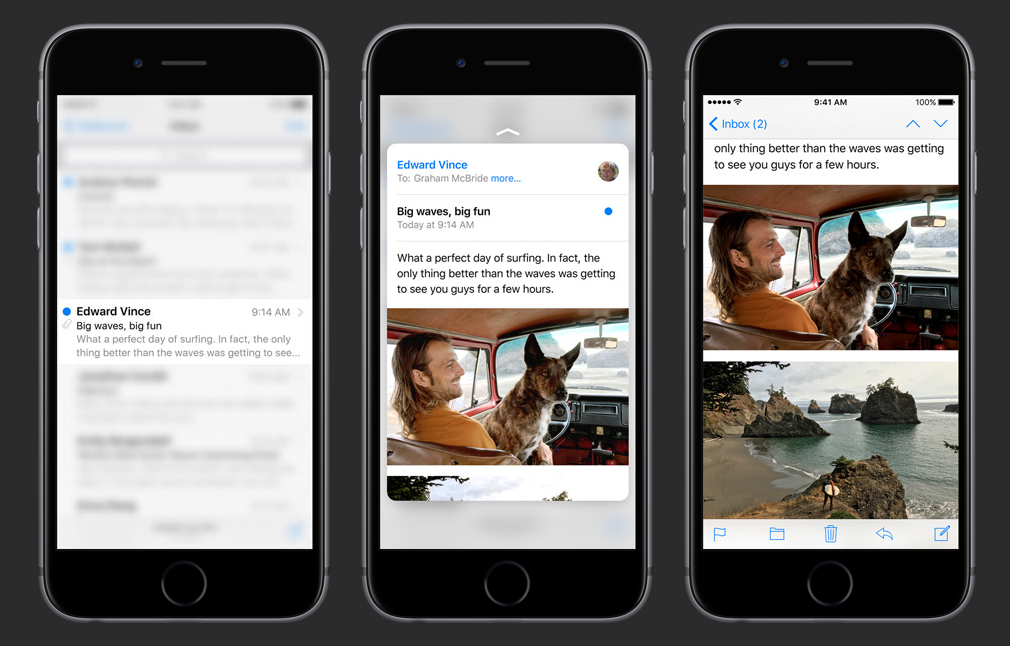

So here’s what we saw on stage at Apple’s event. The most important 3D Touch use case lets you peek into content — this way, you can preview an email, a photo, a link, an address, a message and go right back to where you were. It saves you a couple of taps and breaks the traditional tree hierarchy. In many ways, this feature is reminiscent of Quick Look on OS X.

When you are done peeking, you have three options. You can press a little deeper to actually go into this email, message or calendar view. You can remove your finger and go back to your feed, email list or camera view.

More interestingly, you can also swipe up a little to reveal a few actions. For example, it’s a good way to flag an email or forward it. This smooth gesture makes sense on a touch screen, as you’d rather swipe your finger around the display than load an email thread, look for the menu, press it, find the forward button and hit it.

Here is another sign that 3D Touch is an important design innovation: the option menu that you access by peeking into some content and swiping up is basically all that Force Touch does on the Apple Watch. 3D Touch lets you do so much more on the iPhone.

Apple also implemented 3D Touch-based home screen shortcuts. Let’s say you want to call one of your favorite contacts. You can deep press on the Phone icon and find your favorite contacts in a pop-up menu. Press your contact’s name and you’re done. For tab-based apps, it can be a nice shortcut as you can go directly to the right tab. In other words, it saves you a tap.

Finally, there are operating system-level gestures as well. You can press from the left side of the home screen to load the multitasking view, or press hard on the keyboard to turn it into a trackpad to move your cursor around.

All of these gestures work in conjunction with Apple’s Taptic Engine to provide you with haptic feedback. The screen won’t move, but you will get feedback from your phone.

But this is just part of the story as developers are going to take advantage of that as well. We saw a few third-party examples on stage, such as Instagram. In Instagram, you can press on the home screen icon to go directly to the activity tab. You can also press on a photo when you’re looking at a grid view to expand a particular Instagram post.

These are some nice day one examples, but I can’t wait to see game developers, drawing apps and photo editing apps take advantage of 3D Touch. Now, there are a few unanswered questions as well.

As 3D Touch will only be available on the iPhone 6s and iPhone 6s Plus, very few people will get the new feature at first. iPad and old iPhone users don’t want to be left out — and don’t forget that the iPhone 6 and 5s are still on sale. That’s why developers need to keep in mind that 3D Touch needs to remain optional for the next few years until the rest of the lineup gets an upgrade.

It’s unclear whether Android OEMs are going to copy Apple on this feature, but multi-platform app developers will also have to keep in mind that the Android version of their apps won’t get pressure-based gestures any time soon.

And yet, I can’t help but think that 3D Touch is one of the biggest mobile user experience innovations of the decade. Other phone manufacturers have experimented with clickable displays, but only Apple can pull it off.

Apple is going to sell tens of millions of iPhone 6s, creating a healthy base of 3D Touch users. But 3D Touch isn’t obvious: there aren’t any on-screen buttons to show you what you can do by pressing on your screen.

The company also has one of the best launch platforms in the world with its keynotes. Millions of people are watching, reading and following these keynotes. They are learning how you’re supposed to interact with your phone thanks to these carefully crafted presentations. On iPhone 6s launch day, millions of people will already know what you can do with 3D Touch. And those who don’t will have friends who do.

But will these new iPhone 6s owners want to use 3D Touch? Yes, they will. For the same reason that you swipe left and right in Tinder instead of pressing the like and dislike buttons, you’ll want a faster way to get things done. I think it’s going to require a bit of adaptation, as many people will long-press at first instead of pressing harder.

Eventually, it’s going to become as effortless as looking at your notifications. 3D Touch isn’t just a nice addition for power users; it is a mainstream feature.
