Jim Hunter


I received a lot of feedback on an article I wrote a few months ago about changing the way we perceive the “Things” in the Internet of Things (IoT).

The gist of my argument was that we should start treating these “Things” more like people — not in the sense of giving them the right to vote and the responsibility of paying taxes, but in the sense of thinking about them the way you would think about an employee hired to fulfill a specific function. Our perception of smart “Things” needs to be “people-ified,” if you will.

I thought it would be nice to follow up on that notion and formalize what, exactly, one of these “Things” comprises. After all, there are a lot of things in the world, objects too numerous to count, but not all of these things are (or should be, or ever will be) IoT “Things.”

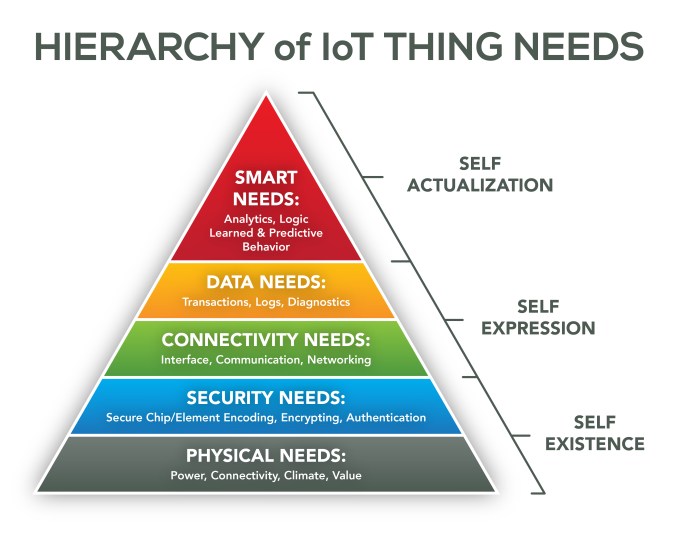

To define an IoT “Thing,” I’m going to employ Maslow’s hierarchy of needs — that well-known human psychology paradigm typically displayed in the shape of a pyramid, with the most fundamental human needs (physiological needs like air, food and water) at the bottom and rising to the most esoteric needs (self-actualization or expression of full potential) at the apex.

I’ve sketched out a derivative needs pyramid for IoT “Things,” charting their ascent to Thing-actualization.

Maslow’s theory suggests that if the needs at the lower levels are not met, a human being will have no desire for those at the higher levels. I suggest something similar applies to “Thing” needs. That is, a “Thing” has no use for a higher level until the needs of every level beneath it are met.
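To make that rule concrete, here is a minimal sketch in Python. The level names come from the five layers of the pyramid in this article; the `ThingNeed` enum and the `highest_level_reached` function are hypothetical names invented for illustration, not part of any IoT framework.

```python
from enum import IntEnum
from typing import Optional, Set


class ThingNeed(IntEnum):
    """The five layers of the pyramid, ordered from base to apex."""
    PHYSICAL = 1
    SECURITY = 2
    COMMUNICATION = 3
    DATA = 4
    SMART = 5


def highest_level_reached(met: Set[ThingNeed]) -> Optional[ThingNeed]:
    """Return the highest layer whose own needs, and all those below it, are met.

    A layer that is met does not count if any layer beneath it is missing.
    Returns None when even the physical layer is not met.
    """
    reached = None
    for level in ThingNeed:  # iterates from PHYSICAL up to SMART
        if level not in met:
            break
        reached = level
    return reached


# A hypothetical "Thing" that handles data but cannot communicate
# is stuck at the security layer.
stuck = highest_level_reached(
    {ThingNeed.PHYSICAL, ThingNeed.SECURITY, ThingNeed.DATA}
)
```

In this sketch, the data layer is ignored because the communication layer beneath it is missing, which is the dependency the pyramid is meant to express.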

At the very base of the pyramid are the most basic-existence needs of a “Thing.” Obviously, it will need power, a physical mechanism for connecting and transmitting (such as a radio) and a material housing for its functionality.

But for IoT, we also need to account for its interaction with its environment — the physical conditions in which the “Thing” will be operating. Is it a sensor for an arctic ice-monitoring project that will be operating in subfreezing temperatures? Is it a wristband activity-and-exercise tracker that should be able to withstand sports impact and jostling, human sweat and rapid changes in body temperature? Should it be waterproof? Heat resistant? Encased in lightweight fabric or titanium?

Finally, since the “Thing” is a thing, it must meet a specific need or bring a value to be useful. As such, we must also account for its ability to meet functional expectations as a core existence requirement.

Once the core physical needs of “Things” are met, and before external connectivity is possible, security is needed. To be quite clear: Security is key for IoT adoption, and thus needs to be addressed for individual “Things” that can be externally accessible.

Accessibility does not mean just connectivity. It also applies to things that can be physically “cracked” open, where lack of security could put stored data at risk.

As we’ve all learned by now, when it comes to anything Internet-related, whatever can be exploited, will be exploited. This truth really has to be faced early on in the creation of each IoT device. Every “Thing” in IoT requires a means to encode, encrypt and authenticate its data.
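As a rough illustration of the authentication part of that requirement, the sketch below signs a sensor reading with an HMAC from the Python standard library so a receiver can detect tampering. It assumes a secret key shared between the “Thing” and its receiver. The function names, the key and the reading are made up for the example. Encryption is deliberately left out, since it would call for a vetted cryptography library rather than hand-rolled code.

```python
import hashlib
import hmac
import json


def sign_reading(key: bytes, reading: dict) -> dict:
    """Serialize a reading and attach an HMAC-SHA256 tag computed with the shared key."""
    payload = json.dumps(reading, sort_keys=True)
    tag = hmac.new(key, payload.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify_reading(key: bytes, message: dict) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = hmac.new(
        key, message["payload"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, message["tag"])


# Placeholder key for illustration only; a real device would provision
# and store its key securely.
shared_key = b"example-key-not-for-production"
message = sign_reading(shared_key, {"sensor": "ice-01", "temp_c": -12.5})
```

A message altered in transit, or one signed with a different key, fails `verify_reading`, which gives the receiver a basis for rejecting it.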

With the security challenges met, the next layer of the pyramid concerns communication needs. Though we noted that a “Thing” needs a physical mechanism for connection as part of its physical needs, this layer addresses the self-expression realm of need.

In short, this layer pinpoints whatever it takes for a “Thing” to share its voice with the world. The specifics of interface and networking are addressed in this layer. What protocols will the “Thing” use for its link and network layers? IEEE 802.15.4? 6LoWPAN? The protocol and language that the “Thing” will speak are addressed at this level, as well. The communication needs supply the “Thing” with its link to the “I” part of the IoT equation.

The next step up in our needs hierarchy pertains to data. Here is where you decide how the “Thing” will handle its collected information. What transactions does it perform? How does it log data? Does it perform diagnostics? What is the function of the data being collected and how is that expressed by the “Thing”?

At the very top of our pyramid, we find smart needs, the equivalent of Maslow’s self-actualization. Here is where the needs of the “Thing” become an expression, not just of a single sensor or communication gateway, but of those combined constructive properties that make the “Thing” useful for the Internet of Things.

Does it contribute to analytics and exhibit logic? Does it present learned and predictive behavior? Is it scalable and self-configuring, operating without the need for human intervention? While it’s not necessary that the “Thing” pass the Turing test, this realm is where it exhibits its true nature.

What I find interesting about this construct is that while its initial scope was that of an individual IoT “Thing,” it is not limited to a single thing.

Consider that individual people join to form committees and organizations, where the new group becomes its own individual entity. In much the same way, a single thing will combine with other things to create groups and networks of things that are regarded as other more “complex” things.

These combined things will have their own needs that can be defined by this construct, as well. I especially like this Seussian micro-to-macro perspective when thinking about both things and the data that they collect and turn into information.
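One way to picture a group of things acting as a single, more complex thing is the composite pattern, sketched below in Python. Everything here is an assumption made for illustration: the `Thing` interface, the `Sensor` and `ThingGroup` classes and the example names are hypothetical, and real devices would expose far richer behavior than a single `read` method.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


class Thing(Protocol):
    """Anything that has a name and can report readings counts as a Thing."""
    name: str

    def read(self) -> Dict[str, float]: ...


@dataclass
class Sensor:
    """A single Thing reporting one value."""
    name: str
    value: float

    def read(self) -> Dict[str, float]:
        return {self.name: self.value}


@dataclass
class ThingGroup:
    """A group of Things that is itself a Thing."""
    name: str
    members: List[Thing] = field(default_factory=list)

    def read(self) -> Dict[str, float]:
        # Prefix each member's keys with the group name so nested
        # groups produce paths such as "home/kitchen/temp".
        combined: Dict[str, float] = {}
        for member in self.members:
            for key, value in member.read().items():
                combined[f"{self.name}/{key}"] = value
        return combined


kitchen = ThingGroup("kitchen", [Sensor("temp", 21.5), Sensor("humidity", 40.0)])
home = ThingGroup("home", [kitchen, Sensor("door", 1.0)])
readings = home.read()
```

Because `ThingGroup` offers the same `read` method as a single `Sensor`, groups can nest inside other groups, mirroring how committees form inside organizations.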

The reason to think this way is to enable the use of familiar paradigms when “Thing” architecture and interaction models are designed. For example, consider this simple question: “What should you consider when purchasing an IoT thing?” With this new thinking, the answer becomes: “The same stuff you consider when you hire a new employee.” Trustworthiness, reliability and ability to work well with others form a great basis for consideration in both cases.

As you “people-ify” things, notice how the perspective shift opens a world of paradigms to leverage.
