Hewlett Packard Enterprise is sending a supercomputer to the International Space Station aboard SpaceX’s next resupply mission for NASA, which is currently set to launch Monday.

Officially named the “Spaceborne Computer,” the Linux-based supercomputer is designed to serve in a one-year experiment conducted by NASA and HPE to find out whether high-performance computing (HPC) hardware, with no hardware customization or modification, can survive and operate in outer space conditions for a full year – the length of time, not coincidentally, it’ll likely take for a crewed spacecraft to make the trip to Mars.

Typically, computers used on the ISS have to be “hardened,” explained Dr. Mark Fernandez, who led the effort on the HPE side as lead payload engineer. This process involves extensive hardware modifications made to the HPC device, which incur a lot of additional cost, time and effort. One unfortunate result of the need for this physical ruggedization process is that HPCs used in space are often generations behind those used on Earth, and that means a lot of advanced computing tasks end up being shuttled off the ISS to Earth, with the results then round-tripped back to astronaut scientists in space.

This works for now, because communication is near-instantaneous between low-Earth orbit, where the ISS resides, and Earth itself. But once you get further out – as far out as Mars, say – communications could take up to 20 minutes between Earth and spaceship staff. If you saw The Martian, you know how significant a delay of that magnitude can be.
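For a rough sense of where a figure like 20 minutes comes from, the one-way delay is simply distance divided by the speed of light. The sketch below assumes approximate closest and farthest Earth-Mars distances of about 54.6 million km and 401 million km; those round numbers, and the function and constant names, are illustrative assumptions rather than anything supplied by NASA or HPE.

```python
# Speed of light in vacuum, in km/s (exact by SI definition).
SPEED_OF_LIGHT_KM_S = 299_792.458

# Approximate Earth-Mars distances in km (assumed round figures;
# the real distance varies continuously with both orbits).
CLOSEST_KM = 54.6e6
FARTHEST_KM = 401e6


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way light travel time in minutes for a distance in km."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60


if __name__ == "__main__":
    for label, distance in (("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)):
        delay = one_way_delay_minutes(distance)
        print(f"Mars at {label} approach: about {delay:.1f} minutes one-way")
```

Under these assumptions the one-way delay ranges from roughly three minutes to a little over 22, and a question-and-answer exchange takes twice that, before any processing time on the ground.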

“Suppose there’s some critical computations that need to be made, on the mission to Mars or when we arrive at Mars, we really need to plan ahead and make sure that that computational capacity is available to them, and it’s not three- or five-year old technology,” Dr. Fernandez told me. “We want to be able to get to them the latest and greatest technology.”

Dr. Fernandez hopes this one-year experiment will show that, in place of hardware modifications, a supercomputer can be “software hardened” to withstand the rigors of space, including temperature fluctuations and exposure to radiation. That software hardening involves making adjustments on the fly to things like processor speed and memory refresh rate in order to correct for detected errors and guarantee correct results.

“All of our modern computers have hardware built error correction and detection in them, and it’s possible that if we give those units enough time, they can correct those errors and we can move on,” Dr. Fernandez said.
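HPE has not published the details of its approach here, so the following is only a toy illustration of the general idea: watch the count of errors the hardware has corrected, slow the processor down when that count climbs, and speed it back up once things settle. Every name, threshold and clock speed in it is hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass
class ThrottlePolicy:
    """Hypothetical tuning knobs; none of these values come from HPE."""
    max_clock_mhz: int = 2400
    min_clock_mhz: int = 1200
    step_mhz: int = 400
    error_threshold: int = 5  # corrected errors tolerated per interval


def next_clock(current_mhz: int, corrected_errors: int, policy: ThrottlePolicy) -> int:
    """Step the clock down when errors exceed the threshold, otherwise step it back up."""
    if corrected_errors > policy.error_threshold:
        return max(policy.min_clock_mhz, current_mhz - policy.step_mhz)
    return min(policy.max_clock_mhz, current_mhz + policy.step_mhz)


def simulate(error_counts: Iterable[int], policy: Optional[ThrottlePolicy] = None) -> List[int]:
    """Return the clock speed chosen after each monitoring interval."""
    policy = policy or ThrottlePolicy()
    clock = policy.max_clock_mhz
    history = []
    for errors in error_counts:
        clock = next_clock(clock, errors, policy)
        history.append(clock)
    return history


if __name__ == "__main__":
    # A made-up burst of errors followed by a quiet period.
    print(simulate([0, 9, 12, 2, 0]))
```

The trade-off this sketch captures is the one Dr. Fernandez describes: running slower costs performance for a while, but it gives the built-in error correction more time to do its job.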

Already on Earth, the control systems used to benchmark the two experimental supercomputers sent to the ISS have demonstrated that this works – a recent lightning storm struck the data center where they’re stationed, causing a power outage and temperature fluctuations, which did not impact the results coming from the HPCs. Dr. Fernandez says that’s a promising sign for how their experimental counterparts will perform in space, but the experiment will still help show how they can react to things you can’t test as accurately on Earth, like exposure to space-based radiation.

And while the long-term goal is to make this technology useful in an eventual mission to Mars, in the near-term it has plenty of potential to make an impact on how research is conducted on the ISS itself.

“One of the things that’s popular today is ‘Let’s move the compute to the data, instead of moving the data to the compute,’ because we’re having a data explosion,” Dr. Fernandez explained. “Well that’s occurring elsewhere as well. The ISS is bringing on board more and more experiments. Today those scientists know how to analyze the data on Earth, and they want to send the data down to Earth. It’s not a Mars-type latency, but still you’ve got to get your data, got to process it and got to get back and change your experimental parameters. Suppose, like at every other national lab in the nation, the computer was right down the office from you.”

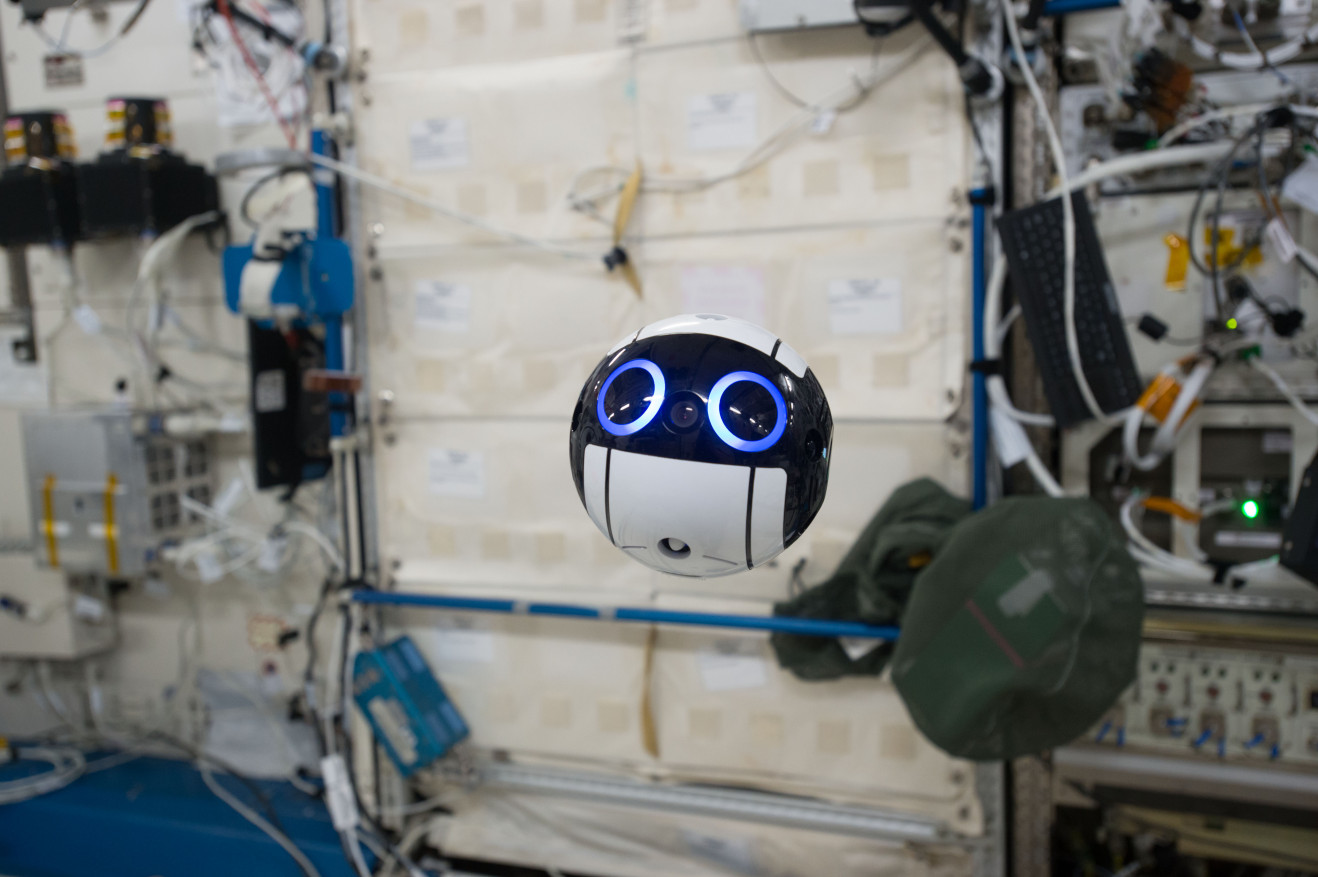

Local supercomputing capability isn’t just a convenience feature; time on the ISS is a precious resource, and anything that makes researchers more efficient has a tremendous impact on the science that can be done during missions. Japan’s JAXA space agency recently started testing an automated camera drone on board for just this purpose, for instance, since not having to hold a camera means researchers can spend more time on actual experimentation. For HPC, this could have an even larger impact in a couple of different ways.

“You do your post-processing right down the hall on the ISS, you’ve saved time, your science is better, faster and you can get more work out of the same amount of experimental time,” Dr. Fernandez said. “Secondly, and more important to me, the network between the ISS and Earth is precious, and it’s allocated by experiment. If I can get some people to get off the network, that will free up bandwidth for people who really need the network.”

In the end, Dr. Fernandez is hoping this experiment opens the door for future testing of other advanced computing techniques in space, including Memory-Driven computing. He also hopes it opens the door for NASA to consider making use of the same sort of system on future Mars missions, to help with the experimentation potential of those journeys, and to help improve their chance of success. But in general, Dr. Fernandez says, he’s just hoping to contribute to the work done by those advancing various fields of research in space.

“I want to help all the scientists on board, and that’s one of the dreams of this experiment,” he said.
