Cruise, the self-driving startup acquired by GM last year, is already operating a complete autonomous ride-hailing service in San Francisco for its employees.

The service is called “Cruise Anywhere,” and it allows employees to use a smartphone app to get anywhere they need to go in SF, seven days a week.

Cruise Anywhere is in beta, hence the employee-only restriction, but the company says that some employees are already using it as their primary source of transportation, replacing either personal vehicle ownership, public transit or traditional ride-hailing services completely. In total, Cruise says 10 percent of its SF employees are using the beta, and more are being enrolled each week with a waitlist currently in place.

“We’ve always said we’d launch first with a rideshare application, and this is in line with that and just further evidence of that,” said Cruise CEO and co-founder Kyle Vogt in an interview. “We’re really excited about how the technology is evolving, and the rate at which it’s evolving. This is a manifestation of that — putting the app in people’s hands and having them use it for the first time and make AVs their primary form of transportation.”

During testing in San Francisco, Cruise has revealed in videos that an app is used to call its prototype AVs. But the company is focused on developing the hailing component as a full-fledged service itself, not just for testing purposes. The aim is to create something that can stand on its own, as Cruise believes that all aspects of the self-driving experience are important to differentiating one autonomous technology provider from another.

“We see a future where we’re open to partnering with one network or partner, many partners or even no partners if that’s the best way to release this technology and achieve the societal benefits of driverless cars sooner,” Vogt explained, noting that focusing on all aspects of the service gives them more flexibility with a go-to-market strategy.

Cruise employees are able to use the Cruise Anywhere service between 16 and 24 hours per day, depending on the availability of the fleet that Cruise operates in SF. This pool of vehicles is set to grow by more than 100 cars in the next couple of months, which should expand operational hours. The service is available across all of the mapped area of San Francisco where the test fleet operates, and the app works like any ridesharing experience, matching ride requesters with available cars.

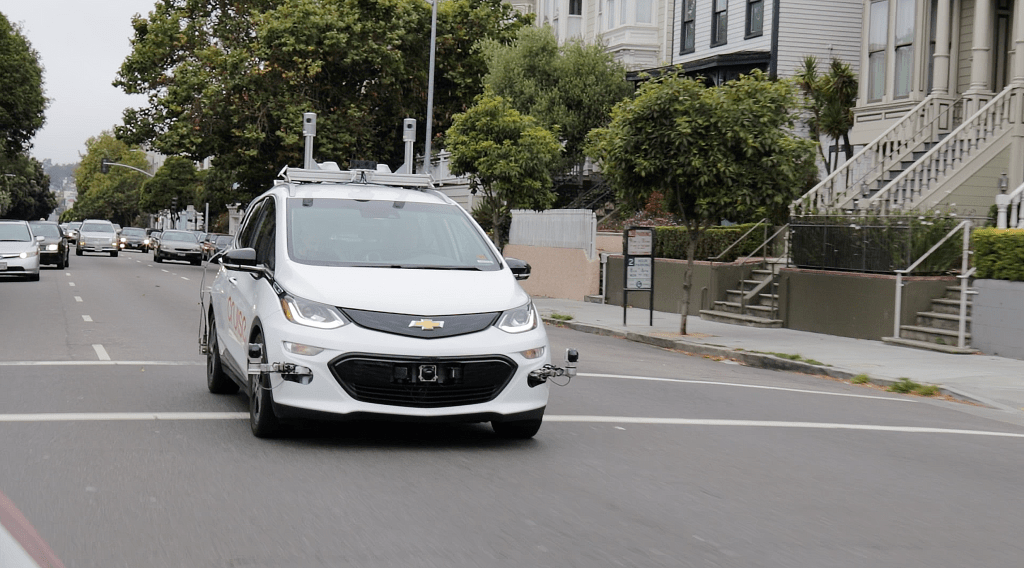

The cars themselves are modified Chevrolet Bolt EVs equipped with sensors and self-driving computers and software, and each also has a safety driver in place behind the wheel for testing and as required by law. Still, Cruise says those drivers have had to take over manual control of vehicles engaged in Cruise Anywhere service only on a few occasions, with the vast majority of the driving done autonomously.

One Cruise employee has already taken more than 60 rides using Cruise Anywhere over the past three weeks or so, and uses it for everything from running errands to going out for drinks. The goal, again, is to build a user experience ready for consumer use, though Cruise isn’t saying if or when it might open it to members of the general public, or expand it to other cities where it’s conducting AV testing.

This latest move by Cruise is definitely a big step — its autonomous technology is now getting road ready for actual commercial service deployments. Competitor Waymo has also debuted an autonomous ride-hailing trial in Chandler, Arizona, with public applications for membership welcome, but Cruise’s service so far seems the broadest in terms of service area and availability based on known information.
